Generalizations of Hölder inequalities for Csiszar’s f-divergence

Journal of Inequalities and Applications volume 2013, Article number: 151 (2013)

Abstract

In this paper, we establish some new generalizations of Hölder’s inequality involving Csiszar’s f-divergence of two probability measures. Some related inequalities are also presented.

MSC: 26D15, 28A25, 60E15.

1 Introduction

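Let $\frac{1}{p}+\frac{1}{q}=1$, and assume that $f(x)$ and $g(x)$ are continuous real-valued functions on $[a,b]$. Then

(1) for $p>1$, we have the following Hölder inequality (see [1]):

$$\int_a^b |f(x)g(x)|\,dx \le \left(\int_a^b |f(x)|^p\,dx\right)^{1/p}\left(\int_a^b |g(x)|^q\,dx\right)^{1/q}; \tag{1.1}$$

(2) for $0<p<1$, we have the following reverse Hölder inequality (see [2]):

$$\int_a^b |f(x)g(x)|\,dx \ge \left(\int_a^b |f(x)|^p\,dx\right)^{1/p}\left(\int_a^b |g(x)|^q\,dx\right)^{1/q}. \tag{1.2}$$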
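As a quick numerical sanity check of the Hölder inequality (1.1) and its reverse (1.2), the following sketch compares both sides on a Riemann-sum discretization. The interval [0, 1], the two test functions, the exponents p = 3 and p = 0.5, and the helper name `holder_sides` are illustrative assumptions, not taken from the paper. The functions are kept strictly positive so that the negative conjugate exponent in the reverse case is well defined.

```python
import numpy as np

def holder_sides(f_vals, g_vals, dx, p):
    """Return (lhs, rhs) of the Hölder comparison for a Riemann-sum discretization."""
    q = p / (p - 1.0)  # conjugate exponent, from 1/p + 1/q = 1
    lhs = np.sum(np.abs(f_vals * g_vals)) * dx
    rhs = ((np.sum(np.abs(f_vals) ** p) * dx) ** (1.0 / p)
           * (np.sum(np.abs(g_vals) ** q) * dx) ** (1.0 / q))
    return lhs, rhs

# Hypothetical test data on [0, 1]; both functions are strictly positive.
x = np.linspace(0.0, 1.0, 2001)
dx = x[1] - x[0]
f_vals = 1.0 + np.sin(3.0 * x) ** 2
g_vals = np.exp(-x) + 0.5

lhs_fwd, rhs_fwd = holder_sides(f_vals, g_vals, dx, p=3.0)  # p > 1: expect lhs <= rhs
lhs_rev, rhs_rev = holder_sides(f_vals, g_vals, dx, p=0.5)  # 0 < p < 1 (q = -1): expect lhs >= rhs

print(lhs_fwd <= rhs_fwd, lhs_rev >= rhs_rev)
```

Because a Riemann sum is itself a weighted finite sum, the discrete Hölder inequality applies to it exactly, so both comparisons are expected to hold and not merely up to discretization error.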

The above inequalities play an important role in many areas of pure and applied mathematics. A large number of generalizations, refinements, variations and applications of (1.1) and (1.2) have been investigated in the literature (see [3–11] and references therein). Recently, G.A. Anastassiou [12] established the following Hölder-type inequalities for Csiszar’s f-divergence of two probability measures.

Theorem 1.1 (see [12])

Let such that . Then

Theorem 1.2 (see [12])

Let , , . Then

which is a generalization of Theorem 1.1.

The following result is the counterpart of Theorem 1.1.

Theorem 1.3 (see [12])

Let and such that , we assume that a.e. . Then we have

The aim of this paper is to give new generalizations of inequalities (1.4) and (1.5). Some related inequalities are also considered. The paper is organized as follows. In Section 2, we recall some basic facts about Csiszar’s f-divergence of two probability measures. In Section 3, we give the main results and their proofs.

2 Preliminaries

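Assume that $f:(0,+\infty)\to\mathbb{R}$ is an arbitrary convex function which is strictly convex at 1. As in Csiszar [12, 13], we adopt the following conventions:

$$f(0)=\lim_{u\to 0^+}f(u),\qquad 0\cdot f\left(\frac{0}{0}\right)=0,\qquad 0\cdot f\left(\frac{a}{0}\right)=\lim_{\varepsilon\to 0^+}\varepsilon f\left(\frac{a}{\varepsilon}\right)=a\lim_{u\to+\infty}\frac{f(u)}{u}\quad(0<a<+\infty).$$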

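Suppose that $(X,A,\lambda)$ is an arbitrary measure space with λ being a finite or σ-finite measure. Let $\mu_1$, $\mu_2$ be probability measures on X such that $\mu_1\ll\lambda$, $\mu_2\ll\lambda$ (absolutely continuous).

The Radon-Nikodym derivatives (densities) of $\mu_i$ with respect to λ are denoted by $p_i(x)$:

$$p_i(x)=\frac{d\mu_i}{d\lambda}(x),\quad i=1,2.$$

Definition 2.1 (see [13])

The f-divergence of the probability measures $\mu_1$ and $\mu_2$ is defined as follows:

$$\Gamma_f(\mu_1,\mu_2)=\int_X p_2(x)\,f\left(\frac{p_1(x)}{p_2(x)}\right)d\lambda(x),$$

where the function f is named the base function. From Lemma 1.1 of [13], $\Gamma_f(\mu_1,\mu_2)$ is always well-defined and $\Gamma_f(\mu_1,\mu_2)\ge f(1)$ with equality only for $\mu_1=\mu_2$. From [13], we know that $\Gamma_f(\mu_1,\mu_2)$ does not depend on the choice of λ. If $f(1)=0$, then $\Gamma_f$ can be considered as the most general measure of difference between probability measures. For arbitrary convex function f, we notice that .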
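To make Definition 2.1 concrete, here is a minimal sketch for the discrete case, where the integral reduces to a sum of p2(x) · f(p1(x)/p2(x)) over a finite set. The function name `f_divergence`, the three base functions and the two sample distributions are illustrative assumptions. The code also assumes that both distributions are strictly positive, so the limiting conventions for zero densities are never needed.

```python
import numpy as np

def f_divergence(f, p1, p2):
    """Discrete f-divergence sum_x p2(x) * f(p1(x) / p2(x)); assumes p2 > 0 everywhere."""
    p1 = np.asarray(p1, dtype=float)
    p2 = np.asarray(p2, dtype=float)
    return float(np.sum(p2 * f(p1 / p2)))

# Three common base functions, each convex with f(1) = 0.
def kl_base(u):         # information for discrimination (needs u > 0)
    return u * np.log(u)

def chi2_base(u):       # chi-square divergence
    return (u - 1.0) ** 2

def variation_base(u):  # variation distance
    return np.abs(u - 1.0)

# Hypothetical strictly positive probability vectors.
mu1 = np.array([0.2, 0.5, 0.3])
mu2 = np.array([0.4, 0.4, 0.2])

bases = (kl_base, chi2_base, variation_base)
divergences = [f_divergence(f, mu1, mu2) for f in bases]
self_divergences = [f_divergence(f, mu2, mu2) for f in bases]

print(divergences)
print(self_divergences)
```

With f(1) = 0, each divergence between the two distinct distributions should be nonnegative, and each divergence of a distribution from itself should vanish, in line with the lower bound and equality case recalled above.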

Csiszar’s f-divergence incorporates most of the special cases of probability measure distances, including the variation distance, the $\chi^2$-divergence, information for discrimination or generalized entropy, information gain, mutual information, mean square contingency, etc. It has many applications to almost all applied sciences where stochastics enters. For more references, one can see [12–22].

In this paper, we assume that every base function f appearing in an f-divergence has all the above properties of f.

3 Main results

In this section, we establish some new generalizations of the Hölder inequality involving Csiszar’s f-divergence of two probability measures.

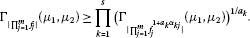

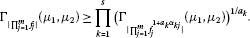

Theorem 3.1 Let , (), , . Then

Proof Here, we use the generalized Hölder’s inequality (see [23]). We obtain

Hence, we get the desired inequality. □
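The proof above rests on a generalized Hölder inequality. As a hedged illustration, the sketch below checks one standard weighted form for m positive sequences, together with its reverse variant. In that form, for exponents $a_j$ with $\sum_j 1/a_j = 1$, the weighted sum of the product is at most the product of the weighted $a_j$-norms when all $a_j > 1$, and at least that product when exactly one exponent lies in (0, 1) and the others are negative. Whether this matches the exact statement in [23] is an assumption. The helper `product_side`, the random data and the chosen exponents are illustrative only.

```python
import numpy as np

def product_side(values, weights, exponents):
    """prod_j ( sum_i w_i * v_{j,i}^{a_j} )^(1/a_j) for strictly positive arrays v_j."""
    out = 1.0
    for v, a in zip(values, exponents):
        out *= np.sum(weights * v ** a) ** (1.0 / a)
    return out

rng = np.random.default_rng(0)
weights = rng.uniform(0.1, 1.0, size=50)                       # positive weights (stand-in for a density)
values = [rng.uniform(0.5, 2.0, size=50) for _ in range(3)]    # three positive sequences

# Left-hand side: weighted sum of the pointwise product.
lhs = np.sum(weights * values[0] * values[1] * values[2])

forward_exps = [2.0, 3.0, 6.0]          # all > 1, reciprocals sum to 1/2 + 1/3 + 1/6 = 1
reverse_exps = [-1.0, -1.0, 1.0 / 3.0]  # two negative, one in (0, 1); reciprocals sum to -1 - 1 + 3 = 1

rhs_forward = product_side(values, weights, forward_exps)
rhs_reverse = product_side(values, weights, reverse_exps)

print(lhs <= rhs_forward, lhs >= rhs_reverse)
```

The reverse case follows from the forward one by applying it to the last sequence written as the full product divided by the other sequences, which is why the values are kept strictly positive.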

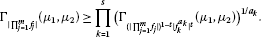

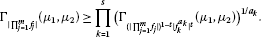

Theorem 3.2 Let (, ), , . Then

(1) for , we have the following inequality:

(3.3)

(2) for , (), we have the following reverse inequality:

(3.4)

Proof (1) Set

(3.5)

Applying the assumptions and , we have

That is,

Then we find

By the inequality (1.4), we obtain

In view of (3.5), we have

By (3.6), (3.7) and (3.8), we obtain inequality (3.3).

(2) Similar to the proof of inequality (3.3), by (3.5), (3.6), (3.8) and inequality (3.1), we obtain inequality (3.4) immediately. □

Corollary 3.1 Under the assumptions of Theorem 3.2, taking , for and with , then we have

(1) for , we have the following inequality:

(3.9)

(2) for , (), we have the following reverse inequality:

(3.10)

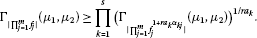

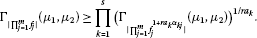

Theorem 3.3 Let (, ), , . Then

(1) for , we have the following inequality:

(3.11)

(2) for , (), we have the following reverse inequality:

(3.12)

Proof (1) Since and , we get . Then by (3.3), we immediately obtain the inequality (3.11).

(2) Since , and , we have . By (3.4), we immediately obtain inequality (3.12). This completes the proof. □

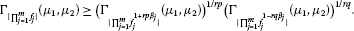

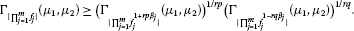

Theorem 3.4 Under the assumptions of Theorem 3.3, and let , , , , then

(1) for , we have the following inequality:

(3.13)

(2) for , we have the following reverse inequality:

(3.14)

Proof (1) By inequality (1.3), we get

(2) Similar to the proof of inequality (3.13), by inequality (1.5), we obtain inequality (3.14). □

Remark Assume that X is a finite or countable discrete set, A is its power set and λ has mass 1 for each $x\in X$. Then $\int_X\cdot\,d\lambda$ becomes a finite or infinite sum, respectively. As a consequence, all the integral inequalities obtained above are discretized and become summation inequalities.
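The discretization described in this remark can be tried out numerically. The sketch below writes Γ_f for the discrete f-divergence, i.e. the sum of p2(x) · f(p1(x)/p2(x)), and checks a Hölder-type bound of the shape Γ_{|f1 f2|} ≤ (Γ_{|f1|^p})^{1/p} (Γ_{|f2|^q})^{1/q} with 1/p + 1/q = 1. That this is the form taken by Theorem 1.1 is an assumption, since the displayed inequality is not recoverable here. The bound itself is simply the weighted Hölder inequality with weights p2. The names `f_divergence`, `f1`, `f2` and the sample distributions are illustrative.

```python
import numpy as np

def f_divergence(f, p1, p2):
    """Discrete f-divergence sum_x p2(x) * f(p1(x) / p2(x)); assumes p2 > 0 everywhere."""
    p1 = np.asarray(p1, dtype=float)
    p2 = np.asarray(p2, dtype=float)
    return float(np.sum(p2 * f(p1 / p2)))

# Two hypothetical base functions (both convex with value 0 at u = 1).
def f1(u):
    return (u - 1.0) ** 2

def f2(u):
    return u * np.log(u)

p, q = 3.0, 1.5  # conjugate exponents: 1/3 + 2/3 = 1

# Hypothetical strictly positive distributions on a four-point set.
mu1 = np.array([0.1, 0.2, 0.3, 0.4])
mu2 = np.array([0.25, 0.25, 0.25, 0.25])

lhs = f_divergence(lambda u: np.abs(f1(u) * f2(u)), mu1, mu2)
rhs = (f_divergence(lambda u: np.abs(f1(u)) ** p, mu1, mu2) ** (1.0 / p)
       * f_divergence(lambda u: np.abs(f2(u)) ** q, mu1, mu2) ** (1.0 / q))

print(lhs <= rhs)
```

Here the integral over X has become a four-term sum, which is exactly the summation form the remark refers to.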

References

[1] Mitrinović DS: Analytic Inequalities. Springer, New York; 1970.

[2] Kuang J: Applied Inequalities. Shandong Science Press, Jinan; 2003.

[3] Hardy G, Littlewood JE, Pólya G: Inequalities. 2nd edition. Cambridge University Press, Cambridge; 1952.

[4] Yang X: A generalization of Hölder inequality. J. Math. Anal. Appl. 2000, 247: 328–330.

[5] Yang X: Refinement of Hölder inequality and application to Ostrowski inequality. Appl. Math. Comput. 2003, 138: 455–461.

[6] Yang X: A note on Hölder inequality. Appl. Math. Comput. 2003, 134: 319–322.

[7] Yang X: Hölder’s inequality. Appl. Math. Lett. 2003, 16: 897–903.

[8] Wu S, Debnath L: Generalizations of Aczél’s inequality and Popoviciu’s inequality. Indian J. Pure Appl. Math. 2005, 36(2):49–62.

[9] He WS: Generalization of a sharp Hölder’s inequality and its application. J. Math. Anal. Appl. 2007, 332: 741–750.

[10] Wu S: A new sharpened and generalized version of Hölder’s inequality and its applications. Appl. Math. Comput. 2008, 197: 708–714.

[11] Kwon EG, Bae EK: On a continuous form of Hölder inequality. J. Math. Anal. Appl. 2008, 343: 585–592.

[12] Anastassiou GA: Hölder-like Csiszar’s type inequalities. Int. J. Pure Appl. Math. 2004, 1: 9–14. www.gbspublisher.com

[13] Csiszar I: Information-type measures of difference of probability distributions and indirect observations. Studia Sci. Math. Hung. 1967, 2: 299–318.

[14] Anastassiou GA, et al.: Basic optimal approximation of Csiszar’s f-divergence. In Proceedings of 11th Internat. Conf. Approx. Th. Edited by: Chui CK. 2004, 15–23.

[15] Anastassiou GA: Fractional and other approximation of Csiszar’s f-divergence. Rend. Circ. Mat. Palermo Suppl. 2005, 76: 197–212.

[16] Anastassiou GA: Representations and estimates to Csiszar’s f-divergence. Panam. Math. J. 2006, 16: 83–106.

[17] Anastassiou GA: Higher order optimal approximation of Csiszar’s f-divergence. Nonlinear Anal. 2005, 61: 309–339.

[18] Csiszar I: Eine Informationstheoretische Ungleichung und ihre Anwendung auf den Beweis der Ergodizität von Markoffschen Ketten. Magy. Tud. Akad. Mat. Kut. Intéz. Közl. 1963, 8: 85–108.

[19] Csiszar I: On topological properties of f-divergences. Studia Sci. Math. Hung. 1967, 2: 329–339.

[20] Dragomir SS: Inequalities for Csiszar f-Divergence in Information Theory. Victoria University, Melbourne; 2000. Edited monograph. On line: http://rgmia.vu.edu.au

[21] Anwar M, Hussain S, Pečarić J: Some inequalities for Csiszár-divergence measures. Int. J. Math. Anal. 2009, 3(26):1295–1304.

[22] Kafka P, Österreicher F, Vincze I: On powers of f-divergences defining a distance. Studia Sci. Math. Hung. 1991, 26(4):415–422.

[23] Cheung W-S: Generalizations of Hölder’s inequality. Int. J. Math. Math. Sci. 2001, 26(1):7–10.

Acknowledgements

Dedicated to Professor Hari M Srivastava.

The authors thank the editor and the referees for their valuable suggestions to improve the quality of this paper.

Competing interests

The authors declare that they have no competing interests.

Authors’ contributions

All the authors contributed to the writing of the present article. They also read and approved the final manuscript.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Chen, GS., Shi, XJ. Generalizations of Hölder inequalities for Csiszar’s f-divergence. J Inequal Appl 2013, 151 (2013). https://doi.org/10.1186/1029-242X-2013-151

DOI: https://doi.org/10.1186/1029-242X-2013-151