- Research Article

- Open access


On a Two-Step Algorithm for Hierarchical Fixed Point Problems and Variational Inequalities

Journal of Inequalities and Applications volume 2009, Article number: 208692 (2009)

Abstract

A common method in solving ill-posed problems is to substitute the original problem by a family of well-posed (i.e., with a unique solution) regularized problems. We will use this idea to define and study a two-step algorithm to solve hierarchical fixed point problems under different conditions on involved parameters.

1. Introduction and Preliminary Results

A common method in solving ill-posed problems is to substitute the original problem by a family of well-posed (i.e., with a unique solution) regularized problems. We will use this idea to define and study a two-step algorithm to solve hierarchical fixed point problems under different conditions on the involved parameters. We will see that, by choosing appropriate hypotheses on the parameters, we obtain convergence to the solution of well-posed problems, while, by changing these assumptions, we obtain convergence to one of the solutions of an ill-posed problem. The results lie along the lines of research of Byrne [1], Yang and Zhao [2], Moudafi [3], and Yao and Liou [4].

In this paper, we consider variational inequalities of the form

$$\text{find } \tilde{x} \in \operatorname{Fix}(T) \text{ such that } \langle (I - S)\tilde{x},\, x - \tilde{x} \rangle \ge 0 \quad \text{for all } x \in \operatorname{Fix}(T), \tag{1.1}$$

where $S, T : C \to C$ are nonexpansive mappings such that the fixed point set of $T$ ($\operatorname{Fix}(T) = \{x \in C : Tx = x\}$) is nonempty and $C$ is a nonempty closed convex subset of a Hilbert space $H$. If we denote by $\Omega$ the set of solutions of (1.1), it is evident that $\operatorname{Fix}(T) \cap \operatorname{Fix}(S) \subseteq \Omega$.

Variational inequalities of the form (1.1) cover several topics recently investigated in the literature, such as monotone inclusions ([5] and the references therein), convex optimization [6], and quadratic minimization over fixed point sets (see, e.g., [5, 7–10] and the references therein).

It is well known that the solutions of (1.1) are the fixed points of the nonexpansive mapping $P_{\operatorname{Fix}(T)} S$.

Many papers in the literature define iterative methods for solving (1.1).

Recently, in [3], Moudafi defined the explicit iterative algorithm

$$x_{n+1} = (1 - \alpha_n)x_n + \alpha_n\big(\sigma_n S x_n + (1 - \sigma_n)T x_n\big), \tag{1.2}$$

where $\{\alpha_n\}$ and $\{\sigma_n\}$ are two sequences in $(0, 1)$, and he proved a weak convergence result. In order to obtain a strong convergence result, Maingé and Moudafi in [11] introduced and studied the iterative algorithm

$$x_{n+1} = \alpha_n f(x_n) + (1 - \alpha_n)\big(\sigma_n S x_n + (1 - \sigma_n)T x_n\big), \tag{1.3}$$

where $f$ is a contraction on $C$ and $\{\alpha_n\}$ and $\{\sigma_n\}$ are two sequences in $(0, 1)$.

Let $f : C \to C$ be a contraction with coefficient $\rho \in [0, 1)$. In this paper, under different conditions on the involved parameters, we study the algorithm

$$\begin{cases} y_n = \beta_n S x_n + (1 - \beta_n) x_n, \\ x_{n+1} = \alpha_n f(x_n) + (1 - \alpha_n) T y_n, \end{cases} \tag{1.4}$$

and give some conditions which ensure that the method converges to a solution of a variational inequality.

We will compare the two methods (1.3) and (1.4) later.
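The two steps of (1.4) can be written in a few lines. This is a minimal illustration and not the authors' code. It assumes the real line as the Hilbert space. The function names `two_step` and `viscosity`, their argument names, and the test maps in the usage note are all hypothetical. The second function is there only to show the special case $\beta_n = 0$ discussed in Remark 2.8.

```python
from typing import Callable, List

RealMap = Callable[[float], float]
Param = Callable[[int], float]


def two_step(S: RealMap, T: RealMap, f: RealMap, alpha: Param, beta: Param,
             x0: float, n_iter: int) -> List[float]:
    """Return x_0, ..., x_{n_iter} generated by the two-step scheme (1.4)."""
    xs = [x0]
    x = x0
    for n in range(n_iter):
        a, b = alpha(n), beta(n)
        y = b * S(x) + (1.0 - b) * x      # first step: y_n between x_n and S x_n
        x = a * f(x) + (1.0 - a) * T(y)   # second step: viscosity step through T
        xs.append(x)
    return xs


def viscosity(T: RealMap, f: RealMap, alpha: Param,
              x0: float, n_iter: int) -> List[float]:
    """Return the viscosity iterates x_{n+1} = a_n f(x_n) + (1 - a_n) T x_n."""
    xs = [x0]
    x = x0
    for n in range(n_iter):
        a = alpha(n)
        x = a * f(x) + (1.0 - a) * T(x)
        xs.append(x)
    return xs
```

For instance, take `T(x) = min(max(x, 0.0), 1.0)` (the projection onto [0, 1]), `S(x) = -x`, `f(x) = 0.5 * x + 0.3` and `alpha(n) = 1 / (n + 2)`. Then `two_step(S, T, f, alpha, lambda n: 0.0, 0.5, 50)` returns the same list as `viscosity(T, f, alpha, 0.5, 50)`, because with $\beta_n = 0$ the first step gives $y_n = x_n$.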

We recall some general results from the theory of Hilbert spaces and of monotone operators.

Lemma 1.1.

For all $x, y \in H$, there holds the inequality

$$\|x + y\|^2 \le \|x\|^2 + 2\langle y, x + y \rangle.$$

If $C$ is a nonempty closed convex subset of a real Hilbert space $H$, the metric projection $P_C : H \to C$ is the mapping defined as follows: for each $x \in H$, $P_C x$ is the only point in $C$ with the property

$$\|x - P_C x\| = \inf_{y \in C}\|x - y\|.$$

Lemma 1.2.

Let $C$ be a nonempty closed convex subset of a real Hilbert space $H$ and let $P_C$ be the metric projection from $H$ onto $C$. Given $x \in H$ and $z \in C$, $z = P_C x$ if and only if

$$\langle x - z, y - z \rangle \le 0 \quad \text{for all } y \in C.$$
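Lemma 1.2 can be checked numerically on a set where $P_C$ has a closed form. The sketch below takes $C$ to be a closed ball in $\mathbb{R}^d$, which is a hypothetical choice; any nonempty closed convex set would do. It samples points $y \in C$ and confirms that $\langle x - P_C x, y - P_C x\rangle$ never becomes positive. Sampling of this kind only illustrates the lemma and does not prove it.

```python
import math
import random
from typing import List

Vec = List[float]


def dot(u: Vec, v: Vec) -> float:
    return sum(a * b for a, b in zip(u, v))


def project_ball(x: Vec, center: Vec, radius: float) -> Vec:
    """Metric projection P_C onto the closed ball C = B(center, radius)."""
    d = [a - c for a, c in zip(x, center)]
    dist = math.sqrt(dot(d, d))
    if dist <= radius:
        return list(x)                       # x is already in C
    t = radius / dist                        # pull x radially back onto the sphere
    return [c + t * di for c, di in zip(center, d)]


def max_variational_gap(x: Vec, center: Vec, radius: float,
                        trials: int = 2000, seed: int = 0) -> float:
    """Largest sampled value of <x - z, y - z> over y in C, where z = P_C x.

    Lemma 1.2 predicts that this value is <= 0.
    """
    rng = random.Random(seed)
    z = project_ball(x, center, radius)
    r_vec = [a - b for a, b in zip(x, z)]
    worst = -math.inf
    for _ in range(trials):
        g = [rng.gauss(0.0, 1.0) for _ in x]       # random direction
        g_norm = math.sqrt(dot(g, g))
        r = radius * rng.random()                  # random radius in [0, radius)
        y = [c + r * gi / g_norm for c, gi in zip(center, g)]
        worst = max(worst, dot(r_vec, [a - b for a, b in zip(y, z)]))
    return worst
```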

Lemma 1.3 (see [7]).

Let $f$ be a contraction with coefficient $\rho \in [0, 1)$ and $T$ be a nonexpansive mapping. Then, for all $x, y \in C$:

(a) the mapping $I - f$ is strongly monotone with coefficient $1 - \rho$, that is,

$$\langle x - y, (I - f)x - (I - f)y \rangle \ge (1 - \rho)\|x - y\|^2;$$

(b) the mapping $I - T$ is monotone, that is,

$$\langle x - y, (I - T)x - (I - T)y \rangle \ge 0.$$
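The two inequalities of Lemma 1.3 can be spot-checked on random pairs of points in $\mathbb{R}^2$. The example is hypothetical. Here $f = \rho R_1 + c$, where $R_1$ is a rotation, so $\|f(x) - f(y)\| = \rho\|x - y\|$. The map $T = R_2$ is a rotation, which is an isometry and hence nonexpansive. For these maps the slack in (a) equals $\rho(1 - \cos 1)\|x - y\|^2$ and the slack in (b) equals $(1 - \cos 2)\|x - y\|^2$. Both sampled minima should therefore be nonnegative up to rounding.

```python
import math
import random
from typing import List

Vec = List[float]


def inner(u: Vec, v: Vec) -> float:
    return sum(a * b for a, b in zip(u, v))


def sub(u: Vec, v: Vec) -> Vec:
    return [a - b for a, b in zip(u, v)]


def rotate(x: Vec, theta: float) -> Vec:
    """Rotation of the plane: an isometry, hence a nonexpansive mapping."""
    c, s = math.cos(theta), math.sin(theta)
    return [c * x[0] - s * x[1], s * x[0] + c * x[1]]


def f(x: Vec, rho: float = 0.5) -> Vec:
    """Contraction with coefficient rho: rho * rotate(x, 1) + (1, -2)."""
    r = rotate(x, 1.0)
    return [rho * r[0] + 1.0, rho * r[1] - 2.0]


def T(x: Vec) -> Vec:
    return rotate(x, 2.0)


def smallest_slacks(trials: int = 2000, rho: float = 0.5, seed: int = 1):
    """Minimum sampled slack in inequalities (a) and (b) of Lemma 1.3."""
    rng = random.Random(seed)
    slack_a = slack_b = math.inf
    for _ in range(trials):
        x = [rng.uniform(-5, 5), rng.uniform(-5, 5)]
        y = [rng.uniform(-5, 5), rng.uniform(-5, 5)]
        d = sub(x, y)
        # (a): <x - y, (I - f)x - (I - f)y> - (1 - rho)||x - y||^2
        a = inner(d, sub(d, sub(f(x, rho), f(y, rho)))) - (1 - rho) * inner(d, d)
        # (b): <x - y, (I - T)x - (I - T)y>
        b = inner(d, sub(d, sub(T(x), T(y))))
        slack_a, slack_b = min(slack_a, a), min(slack_b, b)
    return slack_a, slack_b
```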

Finally, we conclude this section with a lemma due to Xu on real sequences, which plays a fundamental role in the sequel.

Lemma 1.4 (see [9]).

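Assume $\{a_n\}$ is a sequence of nonnegative numbers such that

$$a_{n+1} \le (1 - \lambda_n)a_n + \delta_n, \quad n \ge 0,$$

where $\{\lambda_n\}$ is a sequence in $(0, 1)$ and $\{\delta_n\}$ is a sequence in $\mathbb{R}$ such that

(1) $\sum_{n=0}^{\infty} \lambda_n = \infty$;

(2) $\limsup_{n\to\infty} \delta_n/\lambda_n \le 0$ or $\sum_{n=0}^{\infty} |\delta_n| < \infty$.

Then $\lim_{n\to\infty} a_n = 0$.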
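Here is a concrete instance of Lemma 1.4. It uses the hypothetical choices $\lambda_n = 1/(n+2)$ and $\delta_n = \lambda_n/(n+2)$, so that $\sum\lambda_n = \infty$ and $\delta_n/\lambda_n \to 0$. Start from $a_0 = 1$ and take equality in the recursion. Induction then gives $(n+1)a_n = H_{n+1}$, the $(n+1)$-st harmonic number. Hence $a_n = H_{n+1}/(n+1) \to 0$, as the lemma predicts, although only at a rate of order $\log n / n$. The code compares the recursion with this closed form.

```python
def xu_iterate(a0: float, n_iter: int) -> float:
    """Iterate a_{n+1} = (1 - lam_n) a_n + delta_n with the hypothetical choices

    lam_n = 1/(n+2)        (sum of lam_n diverges: condition (1))
    delta_n = lam_n/(n+2)  (delta_n / lam_n -> 0: condition (2))
    """
    a = a0
    for n in range(n_iter):
        lam = 1.0 / (n + 2)
        delta = lam / (n + 2)
        a = (1.0 - lam) * a + delta
    return a


def closed_form(n: int) -> float:
    """For a0 = 1 the recursion gives a_n = H_{n+1} / (n+1), H_k the harmonic numbers."""
    return sum(1.0 / k for k in range(1, n + 2)) / (n + 1)
```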

2. Convergence of the Two-Step Iterative Algorithm

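Let us consider the scheme

$$\begin{cases} y_n = \beta_n S x_n + (1 - \beta_n) x_n, \\ x_{n+1} = \alpha_n f(x_n) + (1 - \alpha_n) T y_n. \end{cases} \tag{2.1}$$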

As we will see, the convergence of the scheme depends on the choice of the parameters $\{\alpha_n\}$ and $\{\beta_n\}$. We list some possible hypotheses on them:

(H1) there exists $\gamma > 0$ such that $\beta_n \le \gamma\alpha_n$ for all $n \ge 0$;

(H2) $\lim_{n\to\infty} \beta_n/\alpha_n = \tau$, with $\tau \in [0, +\infty]$;

(H3) $\alpha_n \to 0$ as $n \to \infty$ and $\sum_{n=0}^{\infty} \alpha_n = \infty$;

(H4) $\sum_{n=1}^{\infty} |\alpha_n - \alpha_{n-1}| < \infty$;

(H5) $\sum_{n=1}^{\infty} |\beta_n - \beta_{n-1}| < \infty$;

(H6) $\lim_{n\to\infty} |\alpha_n - \alpha_{n-1}|/\alpha_n = 0$;

(H7) $\lim_{n\to\infty} |\beta_n - \beta_{n-1}|/\beta_n = 0$;

(H8) ;


(H9) there exists  such that  .

Proposition 2.1.

Assume that (H1) holds. Then $\{x_n\}$ and $\{y_n\}$ are bounded.

Proof.

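Let $p \in \operatorname{Fix}(T)$. Since $\|y_n - p\| \le (1 - \beta_n)\|x_n - p\| + \beta_n\|Sx_n - Sp\| + \beta_n\|Sp - p\| \le \|x_n - p\| + \beta_n\|Sp - p\|$ and $\beta_n \le \gamma\alpha_n$ by (H1), we have

$$\begin{aligned}
\|x_{n+1} - p\| &\le \alpha_n\|f(x_n) - f(p)\| + \alpha_n\|f(p) - p\| + (1 - \alpha_n)\|Ty_n - p\| \\
&\le \rho\alpha_n\|x_n - p\| + \alpha_n\|f(p) - p\| + (1 - \alpha_n)\big(\|x_n - p\| + \beta_n\|Sp - p\|\big) \\
&\le \big(1 - (1 - \rho)\alpha_n\big)\|x_n - p\| + \alpha_n\big(\|f(p) - p\| + \gamma\|Sp - p\|\big).
\end{aligned} \tag{2.2}$$

So, by induction, one can see that

$$\|x_n - p\| \le \max\left\{\|x_0 - p\|, \frac{\|f(p) - p\| + \gamma\|Sp - p\|}{1 - \rho}\right\}. \tag{2.3}$$

Of course, $\{y_n\}$ is bounded too.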
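The induction in the proof of Proposition 2.1 can be made explicit. Assume (H1) reads $\beta_n \le \gamma\alpha_n$. Then one obtains, for any $p \in \operatorname{Fix}(T)$, $\|x_n - p\| \le \max\{\|x_0 - p\|, (\|f(p) - p\| + \gamma\|Sp - p\|)/(1 - \rho)\}$. The sketch below checks this bound along single runs on $\mathbb{R}$ with hypothetical data:

- $T$ is the projection onto $[0, 1]$.
- $S(x) = 3 - x$, which is an isometry and hence nonexpansive.
- $f(x) = x/2 + 4$, so $\rho = 1/2$.
- $p = 0$.
- $\alpha_n = 1/(n+2)$ and $\beta_n = \min(1, \gamma\alpha_n)$.

```python
def T(x: float) -> float:
    """Metric projection onto [0, 1]; nonexpansive with Fix(T) = [0, 1]."""
    return min(max(x, 0.0), 1.0)


def S(x: float) -> float:
    """Reflection x -> 3 - x: an isometry of R, hence nonexpansive."""
    return 3.0 - x


def f(x: float) -> float:
    """Contraction with coefficient rho = 0.5."""
    return 0.5 * x + 4.0


def bound_excess(x0: float, gamma: float, n_iter: int) -> float:
    """max over n of |x_n - p| minus the induction bound, with p = 0 in Fix(T).

    The bound is max{|x0 - p|, (|f(p) - p| + gamma |S p - p|) / (1 - rho)}.
    """
    rho, p = 0.5, 0.0
    bound = max(abs(x0 - p),
                (abs(f(p) - p) + gamma * abs(S(p) - p)) / (1.0 - rho))
    x, excess = x0, -float("inf")
    for n in range(n_iter):
        a = 1.0 / (n + 2)
        b = min(1.0, gamma * a)          # enforces beta_n <= gamma * alpha_n
        y = b * S(x) + (1.0 - b) * x
        x = a * f(x) + (1.0 - a) * T(y)
        excess = max(excess, abs(x - p) - bound)
    return excess
```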

Proposition 2.2.

Suppose that (H1) and (H3) hold. Also, assume that either (H4) and (H5) hold, or (H6) and (H7) hold. Then

(1) $\{x_n\}$ is asymptotically regular, that is,

$$\lim_{n\to\infty}\|x_{n+1} - x_n\| = 0; \tag{2.4}$$

(2) the set $\omega_w(x_n)$ of weak cluster points of $\{x_n\}$ is contained in $\operatorname{Fix}(T)$.

Proof.

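Observing that

$$x_{n+1} - x_n = \alpha_n\big(f(x_n) - f(x_{n-1})\big) + (\alpha_n - \alpha_{n-1})\big(f(x_{n-1}) - Ty_{n-1}\big) + (1 - \alpha_n)(Ty_n - Ty_{n-1}), \tag{2.5}$$

then, passing to the norm, we have

$$\|x_{n+1} - x_n\| \le \rho\alpha_n\|x_n - x_{n-1}\| + |\alpha_n - \alpha_{n-1}|\,\|f(x_{n-1}) - Ty_{n-1}\| + (1 - \alpha_n)\|y_n - y_{n-1}\|. \tag{2.6}$$

By definition of $y_n$, one obtains that

$$\begin{aligned}
\|y_n - y_{n-1}\| &= \big\|(1 - \beta_n)(x_n - x_{n-1}) + \beta_n(Sx_n - Sx_{n-1}) + (\beta_n - \beta_{n-1})(Sx_{n-1} - x_{n-1})\big\| \\
&\le \|x_n - x_{n-1}\| + |\beta_n - \beta_{n-1}|\,\|Sx_{n-1} - x_{n-1}\|,
\end{aligned} \tag{2.7}$$

so, substituting (2.7) in (2.6), we obtain

$$\|x_{n+1} - x_n\| \le \big(1 - (1 - \rho)\alpha_n\big)\|x_n - x_{n-1}\| + |\alpha_n - \alpha_{n-1}|\,\|f(x_{n-1}) - Ty_{n-1}\| + |\beta_n - \beta_{n-1}|\,\|Sx_{n-1} - x_{n-1}\|. \tag{2.8}$$

By Proposition 2.1, we can set $M := \sup_{n}\big(\|f(x_n) - Ty_n\| + \|Sx_n - x_n\|\big) < \infty$, so we have

$$\|x_{n+1} - x_n\| \le \big(1 - (1 - \rho)\alpha_n\big)\|x_n - x_{n-1}\| + M\big(|\alpha_n - \alpha_{n-1}| + |\beta_n - \beta_{n-1}|\big). \tag{2.9}$$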

So, if (H4) and (H5) hold, we obtain the asymptotic regularity by Lemma 1.4.

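If, instead, (H6) and (H7) hold, from (H1) we can write

$$\frac{|\beta_n - \beta_{n-1}|}{\alpha_n} \le \gamma\,\frac{|\beta_n - \beta_{n-1}|}{\beta_n} \to 0 \quad (n \to \infty), \tag{2.10}$$

so the asymptotic regularity follows by Lemma 1.4 also.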

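In order to prove (2), we can observe that

$$\|x_n - Tx_n\| \le \|x_n - x_{n+1}\| + \alpha_n\|f(x_n) - Ty_n\| + \|Ty_n - Tx_n\| \le \|x_n - x_{n+1}\| + \alpha_n\|f(x_n) - Ty_n\| + \beta_n\|Sx_n - x_n\|. \tag{2.11}$$

By (H1) and (H3) it follows that $\beta_n \to 0$ as $n \to \infty$, so that $\|x_n - Tx_n\| \to 0$, since $\{x_n\}$ is asymptotically regular. By the demiclosedness principle we obtain the thesis.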
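Asymptotic regularity and the estimate $\|x_n - Tx_n\| \to 0$ can be observed along a single run. The sketch uses hypothetical data on $\mathbb{R}$:

- $T$ is the projection onto $[0, 1]$.
- $S(x) = -x$.
- $f(x) = x/2 + 0.3$.
- $\alpha_n = 1/(n+2)$ and $\beta_n = \alpha_n^2$.

These choices give $\beta_n \le \alpha_n$, $\alpha_n \to 0$ and $\sum\alpha_n = \infty$. The function reports $|x_N - x_{N-1}|$ and $|x_N - Tx_N|$ after $N$ steps.

```python
def T(x: float) -> float:
    return min(max(x, 0.0), 1.0)     # projection onto [0, 1]; Fix(T) = [0, 1]


def S(x: float) -> float:
    return -x                        # nonexpansive


def f(x: float) -> float:
    return 0.5 * x + 0.3             # contraction, rho = 0.5


def regularity_residuals(n_iter: int, x0: float = 5.0):
    """Return (|x_N - x_{N-1}|, |x_N - T x_N|) after N = n_iter steps of (1.4)."""
    x = x0
    step = float("nan")
    for n in range(n_iter):
        a = 1.0 / (n + 2)            # alpha_n -> 0, sum of alpha_n diverges
        b = a * a                    # beta_n = alpha_n^2 <= alpha_n
        y = b * S(x) + (1.0 - b) * x
        x_next = a * f(x) + (1.0 - a) * T(y)
        step, x = abs(x_next - x), x_next
    return step, abs(x - T(x))
```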

Corollary 2.3.

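Suppose that the hypotheses of Proposition 2.2 hold. Then

(i) $\lim_{n\to\infty}\|x_n - Ty_n\| = 0$;

(ii) $\lim_{n\to\infty}\|x_n - y_n\| = 0$;

(iii) $\lim_{n\to\infty}\|y_n - Ty_n\| = 0$.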

Proof.

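To prove (i), we can observe that

$$\|x_n - Ty_n\| \le \|x_n - x_{n+1}\| + \|x_{n+1} - Ty_n\| = \|x_n - x_{n+1}\| + \alpha_n\|f(x_n) - Ty_n\|. \tag{2.12}$$

The asymptotic regularity of $\{x_n\}$, together with $\alpha_n \to 0$ and the boundedness of $\{f(x_n) - Ty_n\}$, gives the claim. Moreover, noting that

$$\|x_n - y_n\| = \beta_n\|Sx_n - x_n\|, \tag{2.13}$$

since $\beta_n \to 0$ as $n \to \infty$, we obtain (ii). Finally, (iii) follows easily from (i) and (ii), since $\|y_n - Ty_n\| \le \|y_n - x_n\| + \|x_n - Ty_n\|$.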

Theorem 2.4.

Suppose (H2) with $\tau = 0$ and (H3). Moreover, suppose that either (H4) and (H5) hold, or (H6) and (H7) hold. If we denote by $x^*$ the unique element in $\operatorname{Fix}(T)$ such that $x^* = P_{\operatorname{Fix}(T)} f(x^*)$, then

(1)

$$\limsup_{n\to\infty}\langle f(x^*) - x^*, x_n - x^* \rangle \le 0; \tag{2.14}$$

(2) $x_n \to x^*$ as $n \to \infty$.

Proof.

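First of all, since $\operatorname{Fix}(T)$ is closed and convex, $P_{\operatorname{Fix}(T)} f$ is a contraction, so there exists a unique $x^* \in \operatorname{Fix}(T)$ such that $x^* = P_{\operatorname{Fix}(T)} f(x^*)$. Moreover, from Lemma 1.2, $x^*$ is characterized by the fact that

$$\langle f(x^*) - x^*, x - x^* \rangle \le 0 \quad \text{for all } x \in \operatorname{Fix}(T). \tag{2.15}$$

Since (H2) implies (H1), $\{x_n\}$ is bounded. Let $\{x_{n_k}\}$ be a subsequence of $\{x_n\}$ such that

$$\limsup_{n\to\infty}\langle f(x^*) - x^*, x_n - x^* \rangle = \lim_{k\to\infty}\langle f(x^*) - x^*, x_{n_k} - x^* \rangle \tag{2.16}$$

and $x_{n_k} \rightharpoonup \tilde{x}$. Thanks to either ((H4) and (H5)) or ((H6) and (H7)), by Proposition 2.2 it follows that $\tilde{x} \in \operatorname{Fix}(T)$. Then

$$\limsup_{n\to\infty}\langle f(x^*) - x^*, x_n - x^* \rangle = \langle f(x^*) - x^*, \tilde{x} - x^* \rangle \le 0. \tag{2.17}$$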

Now we observe that, by Lemma 1.1

Since  then


Thus, by Lemma 1.4, $x_n \to x^*$ as $n \to \infty$.
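Theorem 2.4 identifies the limit as the fixed point $x^*$ of the contraction $P_{\operatorname{Fix}(T)} f$. The example below is hypothetical and one-dimensional:

- $T$ is the projection onto $[0, 1]$, so $\operatorname{Fix}(T) = [0, 1]$ and $P_{\operatorname{Fix}(T)} = T$.
- $S(x) = -x$.
- $f(x) = x/2 + 0.3$.

Banach iteration gives $x^* = 0.6$. A run of the scheme with $\alpha_n = 1/(n+2)$ and $\beta_n = \alpha_n^2$ (so that $\beta_n/\alpha_n \to 0$) approaches the same point. Convergence is slow here. Inside $[0, 1]$ the scheme reads $x_{n+1} = (1 - \alpha_n/2 - 2\alpha_n^2 + 2\alpha_n^3)x_n + 0.3\alpha_n$, so each step contracts the error only by a factor of about $1 - \alpha_n/2$.

```python
from typing import Callable


def T(x: float) -> float:
    return min(max(x, 0.0), 1.0)     # T = P_[0,1], so P_Fix(T) = T here


def S(x: float) -> float:
    return -x


def f(x: float) -> float:
    return 0.5 * x + 0.3             # contraction with rho = 0.5


def banach_fixed_point(g: Callable[[float], float], x0: float = 0.0,
                       tol: float = 1e-14, max_iter: int = 10_000) -> float:
    """Fixed point of a contraction g by Picard (Banach) iteration."""
    x = x0
    for _ in range(max_iter):
        gx = g(x)
        if abs(gx - x) <= tol:
            return gx
        x = gx
    return x


def scheme_limit(n_iter: int, x0: float = 0.5) -> float:
    """Last iterate of (1.4) with alpha_n = 1/(n+2), beta_n = alpha_n^2."""
    x = x0
    for n in range(n_iter):
        a = 1.0 / (n + 2)
        b = a * a                    # beta_n / alpha_n -> 0, i.e. tau = 0
        x = a * f(x) + (1.0 - a) * T(b * S(x) + (1.0 - b) * x)
    return x
```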

Theorem 2.5.

Suppose that (H2) with  , (H3), (H8), (H9) hold. Then

, (H3), (H8), (H9) hold. Then  , as

, as  , where

, where  is the unique solution of the variational inequality

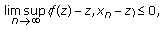

is the unique solution of the variational inequality

Proof.

First of all, we show that (2.20) cannot have more than one solution. Indeed, let $\tilde{x}$ and $\hat{x}$ be two solutions. Then, since $\tilde{x}$ is a solution, for $x = \hat{x}$ one has

$$\langle (I - f)\tilde{x} + \tau(I - S)\tilde{x},\, \hat{x} - \tilde{x} \rangle \ge 0. \tag{2.21}$$

Analogously

$$\langle (I - f)\hat{x} + \tau(I - S)\hat{x},\, \tilde{x} - \hat{x} \rangle \ge 0. \tag{2.22}$$

Adding (2.21) and (2.22), and using Lemma 1.3, we obtain

$$(1 - \rho)\|\tilde{x} - \hat{x}\|^2 \le \langle (I - f)\tilde{x} - (I - f)\hat{x}, \tilde{x} - \hat{x} \rangle + \tau\langle (I - S)\tilde{x} - (I - S)\hat{x}, \tilde{x} - \hat{x} \rangle \le 0, \tag{2.23}$$

so $\tilde{x} = \hat{x}$. Also now the condition (H2) with $\tau \in (0, +\infty)$ implies (H1), so the sequence $\{x_n\}$ is bounded. Moreover, since (H8) implies (H6) and (H7), $\{x_n\}$ is asymptotically regular. Similarly, by Proposition 2.2, the set of weak cluster points of $\{x_n\}$, $\omega_w(x_n)$, is a subset of $\operatorname{Fix}(T)$. Now we have

so that

and denoting by  we have


Dividing by $\alpha_n$ in (2.9), one observes that

By Lemma 1.4, we have

so also  is a null sequence as $n \to \infty$. Fixing $z \in \operatorname{Fix}(T)$, by (2.26) it results

By Lemma 1.3, we obtain that

Now, we observe that

so, since  and  as $n \to \infty$, every weak cluster point of $\{x_n\}$ is also a strong cluster point.

By Proposition 2.2,  is bounded, thus there exists a subsequence  converging to  . For all $z \in \operatorname{Fix}(T)$, by (2.26)

Passing to the limit, we obtain

which is (2.20). Thus, since (2.20) cannot have more than one solution, it follows that this limit coincides with $\tilde{x}$, and this, of course, ensures that $x_n \to \tilde{x}$ as $n \to \infty$.
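When $\beta_n/\alpha_n \to \tau \in (0, \infty)$, the limit depends on $\tau$. The sketch below assumes that (2.20) is the inequality $\langle (I - f)\tilde{x} + \tau(I - S)\tilde{x}, x - \tilde{x}\rangle \ge 0$ on $\operatorname{Fix}(T)$. It uses hypothetical data on $\mathbb{R}$:

- $T$ is the projection onto $[0, 1]$.
- $S(x) = -x$.
- $f(x) = x/2 + 0.3$.
- $\alpha_n = 1/(n+2)$ and $\beta_n = \tau\alpha_n$, capped at $1/2$ for the first few $n$.

For these maps, $(I - f)u + \tau(I - S)u = (1/2 + 2\tau)u - 0.3$ is increasing in $u$. The solution on $[0, 1]$ is therefore $0.3/(1/2 + 2\tau)$, for example $0.12$ when $\tau = 1$. The code does not check (H8) or (H9).

```python
def T(x: float) -> float:
    return min(max(x, 0.0), 1.0)     # projection onto [0, 1]; Fix(T) = [0, 1]


def S(x: float) -> float:
    return -x                        # nonexpansive


def f(x: float) -> float:
    return 0.5 * x + 0.3             # contraction, rho = 0.5


def run_tau(tau: float, n_iter: int, x0: float = 0.5) -> float:
    """Iterate (1.4) with alpha_n = 1/(n+2) and beta_n = min(tau * alpha_n, 1/2)."""
    x = x0
    for n in range(n_iter):
        a = 1.0 / (n + 2)
        b = min(tau * a, 0.5)        # beta_n / alpha_n -> tau
        x = a * f(x) + (1.0 - a) * T(b * S(x) + (1.0 - b) * x)
    return x


def regularized_vi_solution(tau: float) -> float:
    """Solution on [0, 1] of <(I - f)u + tau (I - S)u, v - u> >= 0 for all v in [0, 1].

    Here (I - f)u + tau (I - S)u = (0.5 + 2 tau) u - 0.3 is increasing in u,
    so the solution is its zero, clipped to [0, 1].
    """
    return T(0.3 / (0.5 + 2.0 * tau))
```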

Proposition 2.6.

Suppose that (H2) holds with  . Suppose that (H3), (H8) and (H9) hold. Moreover let

. Suppose that (H3), (H8) and (H9) hold. Moreover let  be bounded and

be bounded and  be a null sequence. Then every

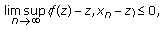

be a null sequence. Then every  is solution of the variational inequality

is solution of the variational inequality

that is,  .

.

Proof.

Since (H8) implies (H6) and (H7), by the boundedness of $\{x_n\}$ we can obtain its asymptotic regularity as in the proof of Proposition 2.2. Moreover, since  , as in the proof of Proposition 2.2,  . With the same notation as in the proof of Theorem 2.5, we have that

holds for all $z \in \operatorname{Fix}(T)$. So, if  and  , by (H2), the boundedness of  ,  and  of Corollary 2.3, we have

If we change $z$ with  ,  , we have

Letting  , finally
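Consider the regime $\beta_n/\alpha_n \to \infty$, assuming this is how (H2) is used in Proposition 2.6. There the $S$-part dominates, and the cluster points should solve (1.1). Take the same hypothetical data: $T$ the projection onto $[0, 1]$, $S(x) = -x$ and $f(x) = x/2 + 0.3$. Problem (1.1) then asks for $\tilde{x} \in [0, 1]$ with $2\tilde{x}(x - \tilde{x}) \ge 0$ for all $x \in [0, 1]$. Taking $x = 0$ forces $\tilde{x} = 0$, so $\Omega = \{0\}$. The run below uses $\alpha_n = 1/(n+4)$ and $\beta_n = \sqrt{\alpha_n}$. Its iterates stay in $[0, 1]$, so boundedness is automatic. They remain close to $0.3\alpha_n/(\alpha_n/2 + 2\beta_n(1 - \alpha_n)) \approx 0.15\,\alpha_n/\beta_n$, which tends to 0 only slowly.

```python
import math


def T(x: float) -> float:
    return min(max(x, 0.0), 1.0)     # projection onto [0, 1]; Fix(T) = [0, 1]


def S(x: float) -> float:
    return -x                        # nonexpansive; (I - S)x = 2x


def f(x: float) -> float:
    return 0.5 * x + 0.3             # contraction, rho = 0.5


def run_dominant_s(n_iter: int, x0: float = 0.5) -> float:
    """Iterate (1.4) with alpha_n = 1/(n+4), beta_n = sqrt(alpha_n) (beta_n/alpha_n -> inf)."""
    x = x0
    for n in range(n_iter):
        a = 1.0 / (n + 4)
        b = math.sqrt(a)             # b <= 1/2 for every n >= 0
        x = a * f(x) + (1.0 - a) * T(b * S(x) + (1.0 - b) * x)
    return x
```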

Remark 2.7.

If we choose  and  (with  ), since  and  , it is not difficult to prove that (H8) is satisfied for  and (H9) is satisfied if  .

Remark 2.8.

It is clear that our algorithm (1.4) is different from (1.3). At the same time, our algorithm (1.4) includes some algorithms in the literature as special cases. For instance, if we take $\beta_n = 0$ in (1.4), then $y_n = x_n$ and we get $x_{n+1} = \alpha_n f(x_n) + (1 - \alpha_n)Tx_n$, which is well known as the viscosity method studied by Moudafi [8] and Xu [10].

Remark 2.9.

We do not know the rate of convergence of our method. Nevertheless, the rates of convergence of our method (1.4), which generates the sequence  , and of the Maingé-Moudafi method (1.3) seem not to be comparable. To see this, we consider three examples. In these examples we take  ,  ,  ,  ,  .

In all three examples all the assumptions (which are the same as for the Maingé-Moudafi method) are satisfied, and the point to which both sequences  and  converge is  .

Example 2.10.

Take  and  . Then

while

Now  , while, for  , it results  . For instance, we report here some values:

However, from the 64th iteration onward,  quickly becomes very small with respect to  . For instance,  while  .

Example 2.11.

Take  . Then

while

that is, the sequences  and  are interchanged with respect to the previous example. So this time  for  and  for  .

Example 2.12.

Take  ,  . Then

so this time  .

Summing up, we cannot claim that our method is more convenient or better than the Maingé-Moudafi method. We can only say that, as far as we know, this is the first time a two-step iterative approach has been introduced for the VIP (1.1). In some cases our method approximates the solution more rapidly than the Maingé-Moudafi method, in other cases the opposite happens, and in still other cases both methods give the same sequence.
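One way the two methods can produce the same sequence is easy to check. Assume (1.3) has the form $x_{n+1} = \alpha_n f(x_n) + (1 - \alpha_n)(\sigma_n S x_n + (1 - \sigma_n)T x_n)$. If $T = I$ and $\sigma_n = \beta_n$, then (1.3) and (1.4) coincide term by term. For $T \ne I$ they generally differ already at the first step. The function names and the maps in the test are hypothetical and are not the data of Examples 2.10, 2.11 and 2.12.

```python
from typing import Callable

RealMap = Callable[[float], float]
Param = Callable[[int], float]


def scheme_14(S: RealMap, T: RealMap, f: RealMap, alpha: Param, beta: Param,
              x0: float, n_iter: int) -> float:
    """Two-step scheme: y_n = b S x_n + (1-b) x_n, x_{n+1} = a f(x_n) + (1-a) T y_n."""
    x = x0
    for n in range(n_iter):
        a, b = alpha(n), beta(n)
        x = a * f(x) + (1.0 - a) * T(b * S(x) + (1.0 - b) * x)
    return x


def scheme_13(S: RealMap, T: RealMap, f: RealMap, alpha: Param, sigma: Param,
              x0: float, n_iter: int) -> float:
    """One-step scheme: x_{n+1} = a f(x_n) + (1-a)(s S x_n + (1-s) T x_n)."""
    x = x0
    for n in range(n_iter):
        a, s = alpha(n), sigma(n)
        x = a * f(x) + (1.0 - a) * (s * S(x) + (1.0 - s) * T(x))
    return x
```

For example, with `S(x) = 0.5 * x`, `f(x) = 0.5 * x + 0.3`, $\alpha_n = \beta_n = \sigma_n = 1/2$ and $x_0 = 1$, one step with $T(x) = -x$ gives $0.025$ for (1.4) and $0.275$ for (1.3). With $T$ the identity, both functions return the same value for every number of steps.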

References

Byrne C: A unified treatment of some iterative algorithms in signal processing and image reconstruction. Inverse Problems 2004,20(1):103–120. 10.1088/0266-5611/20/1/006

Yang Q, Zhao J: Generalized KM theorems and their applications. Inverse Problems 2006,22(3):833–844. 10.1088/0266-5611/22/3/006

Moudafi A: Krasnoselski-Mann iteration for hierarchical fixed-point problems. Inverse Problems 2007,23(4):1635–1640. 10.1088/0266-5611/23/4/015

Yao Y, Liou Y-C: Weak and strong convergence of Krasnoselski-Mann iteration for hierarchical fixed point problems. Inverse Problems 2008,24(1):-8.

Yamada I: The hybrid steepest descent method for the variational inequality problem over the intersection of fixed point sets of nonexpansive mappings. In Inherently Parallel Algorithms in Feasibility and Optimization and Their Applications (Haifa, 2000), Studies in Computational Mathematics. Volume 8. North-Holland, Amsterdam, The Netherlands; 2001:473–504.

Solodov M: An explicit descent method for bilevel convex optimization. Journal of Convex Analysis 2007,14(2):227–237.

Marino G, Xu H-K: A general iterative method for nonexpansive mappings in Hilbert spaces. Journal of Mathematical Analysis and Applications 2006,318(1):43–52. 10.1016/j.jmaa.2005.05.028

Moudafi A: Viscosity approximation methods for fixed-points problems. Journal of Mathematical Analysis and Applications 2000,241(1):46–55. 10.1006/jmaa.1999.6615

Xu H-K: Iterative algorithms for nonlinear operators. Journal of the London Mathematical Society 2002,66(1):240–256. 10.1112/S0024610702003332

Xu H-K: Viscosity approximation methods for nonexpansive mappings. Journal of Mathematical Analysis and Applications 2004,298(1):279–291. 10.1016/j.jmaa.2004.04.059

Maingé P-E, Moudafi A: Strong convergence of an iterative method for hierarchical fixed-point problems. Pacific Journal of Optimization 2007,3(3):529–538.

Acknowledgment

The authors are extremely grateful to the anonymous referees for their useful comments and suggestions. This work was supported in part by Ministero dell'Università e della Ricerca of Italy.


Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License ( https://creativecommons.org/licenses/by/2.0 ), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Cianciaruso, F., Marino, G., Muglia, L. et al. On a Two-Step Algorithm for Hierarchical Fixed Point Problems and Variational Inequalities. J Inequal Appl 2009, 208692 (2009). https://doi.org/10.1155/2009/208692


DOI: https://doi.org/10.1155/2009/208692