- Research Article

- Open access

Lyapunov Stability of Quasilinear Implicit Dynamic Equations on Time Scales

Journal of Inequalities and Applications volume 2011, Article number: 979705 (2011)

Abstract

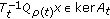

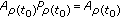

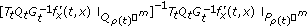

This paper studies the stability of the solution $x \equiv 0$ for a class of quasilinear implicit dynamic equations on time scales of the form  . We deal with an index concept to study the solvability and use Lyapunov functions as a tool to approach the stability problem.

1. Introduction

The stability theory of quasilinear differential-algebraic equations (DAEs for short)

with  being a given  -matrix function, has been an intensively discussed field in both theory and practice. This problem arises in many real applications, such as electric circuits, chemical reactions, and vehicle systems. März in [1] has dealt with the question whether the zero-solution of (1.1) is asymptotically stable in the Lyapunov sense with  , with  being constant and small perturbation  .

Together with the theory of DAEs, there has been great interest in singular difference equations (SDEs) (also referred to as descriptor systems or implicit difference equations)

This model appears in many practical areas, such as the Leontiev dynamic model of multisector economy, the Leslie population growth model, and singular discrete optimal control problems. On the other hand, SDEs occur naturally when discretization techniques are used for solving DAEs and partial differential-algebraic equations, which have already attracted much attention from researchers (cf. [2–4]). When  , in [5], the authors considered the solvability of the Cauchy problem for (1.2); the question of stability of the zero-solution of (1.2) has been considered in [6] where the nonlinear perturbation  is small and does not depend on  .

Further, in recent years, to unify the presentation of continuous and discrete analysis, a new theory was born and has attracted increasing attention, namely, the theory of the analysis on time scales. The most popular examples of time scales are $\mathbb{T} = \mathbb{R}$ and $\mathbb{T} = \mathbb{Z}$. Using the "language" of time scales, we rewrite (1.1) and (1.2) under a unified form

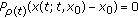

with $t$ in the time scale $\mathbb{T}$ and $\Delta$ being the derivative operator on $\mathbb{T}$. When $\mathbb{T} = \mathbb{R}$, (1.3) is (1.1); if $\mathbb{T} = \mathbb{Z}$, we have a similar equation to (1.2) if it is rewritten under the form  .

The purpose of this paper is to answer the question whether results of stability for (1.1) and (1.2) can be extended and unified for the implicit dynamic equations of the form (1.3). The main tool to study the stability of this implicit dynamic equation is a generalized direct Lyapunov method, and the results of this paper can be considered as a generalization of (1.1) and (1.2).

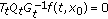

The organization of this paper is as follows. In Section 2, we briefly present some basic notions of the analysis on time scales and give the solvability of the Cauchy problem for quasilinear implicit dynamic equations

with small perturbation  and for quasilinear implicit dynamic equations of the style

with the assumption of differentiability for  . The main results of this paper are established in Section 3 where we deal with the stability of (1.5). The technique we use in this section is somewhat similar to the one in [6–8]. However, we need some improvements because of the complicated structure of every time scale.

2. Nonlinear Implicit Dynamic Equations on Time Scales

2.1. Some Basic Notations of the Theory of the Analysis on Time Scales

A time scale is a nonempty closed subset of the real numbers $\mathbb{R}$, and we usually denote it by the symbol $\mathbb{T}$. We assume throughout that a time scale $\mathbb{T}$ is endowed with the topology inherited from the real numbers with the standard topology. We define the forward jump operator and the backward jump operator $\sigma, \rho : \mathbb{T} \to \mathbb{T}$ by $\sigma(t) = \inf\{s \in \mathbb{T} : s > t\}$ (supplemented by $\inf \emptyset = \sup \mathbb{T}$) and $\rho(t) = \sup\{s \in \mathbb{T} : s < t\}$ (supplemented by $\sup \emptyset = \inf \mathbb{T}$). The graininess $\mu : \mathbb{T} \to \mathbb{R}^{+} \cup \{0\}$ is given by $\mu(t) = \sigma(t) - t$. A point $t \in \mathbb{T}$ is said to be right-dense if $\sigma(t) = t$, right-scattered if $\sigma(t) > t$, left-dense if $\rho(t) = t$, left-scattered if $\rho(t) < t$, and isolated if $t$ is right-scattered and left-scattered. For every $a, b \in \mathbb{T}$, by $[a, b]$, we mean the set $\{t \in \mathbb{T} : a \le t \le b\}$. The set $\mathbb{T}^{k}$ is defined to be $\mathbb{T}$ if $\mathbb{T}$ does not have a left-scattered maximum; otherwise, it is $\mathbb{T}$ without this left-scattered maximum.

Let $f$ be a function defined on $\mathbb{T}$, valued in $\mathbb{R}^{m}$. We say that $f$ is delta differentiable (or simply: differentiable) at $t \in \mathbb{T}^{k}$ provided there exists a vector $f^{\Delta}(t) \in \mathbb{R}^{m}$, called the derivative of $f$, such that for all $\epsilon > 0$ there is a neighborhood $V$ around $t$ with $\|f(\sigma(t)) - f(s) - f^{\Delta}(t)(\sigma(t) - s)\| \le \epsilon |\sigma(t) - s|$ for all $s \in V$. If $f$ is differentiable for every $t \in \mathbb{T}^{k}$, then $f$ is said to be differentiable on $\mathbb{T}$. If $\mathbb{T} = \mathbb{R}$, then the delta derivative is $f'(t)$ from continuous calculus; if $\mathbb{T} = \mathbb{Z}$, the delta derivative is the forward difference, $\Delta f(t) = f(t + 1) - f(t)$, from discrete calculus.

A function $f$ defined on $\mathbb{T}$ is rd-continuous if it is continuous at every right-dense point and if the left-sided limit exists at every left-dense point. The set of all rd-continuous functions from $\mathbb{T}$ to a Banach space $X$ is denoted by $C_{rd}(\mathbb{T}, X)$. A matrix function $f$ from $\mathbb{T}$ to $\mathbb{R}^{m \times m}$ is said to be regressive if $\det(I + \mu(t) f(t)) \neq 0$ for all $t \in \mathbb{T}^{k}$, and we denote by $\mathcal{R}$ the set of regressive functions from $\mathbb{T}$ to $\mathbb{R}^{m \times m}$. Moreover, we denote by $\mathcal{R}^{+}$ the set of positively regressive functions from $\mathbb{T}$ to $\mathbb{R}$, that is, the set $\{f : \mathbb{T} \to \mathbb{R} : 1 + \mu(t) f(t) > 0 \text{ for all } t \in \mathbb{T}\}$.
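The jump operators and the graininess are easy to experiment with on a finite time scale. The following is a minimal sketch, assuming the time scale is given as a finite set of reals (so every point except the maximum is right-scattered); the helper names `make_time_scale`, `sigma`, `rho`, `mu`, and `delta_derivative` are illustrative and do not come from the paper.

```python
from bisect import bisect_left, bisect_right


def make_time_scale(points):
    """Return a sorted tuple of distinct points modelling a finite time scale."""
    return tuple(sorted(set(points)))


def sigma(ts, t):
    """Forward jump: smallest point of ts strictly greater than t.

    If no such point exists, return max(ts) (convention inf(empty) = sup T).
    """
    i = bisect_right(ts, t)
    return ts[i] if i < len(ts) else ts[-1]


def rho(ts, t):
    """Backward jump: largest point of ts strictly less than t.

    If no such point exists, return min(ts) (convention sup(empty) = inf T).
    """
    i = bisect_left(ts, t)
    return ts[i - 1] if i > 0 else ts[0]


def mu(ts, t):
    """Graininess mu(t) = sigma(t) - t."""
    return sigma(ts, t) - t


def delta_derivative(ts, f, t):
    """Delta derivative at a right-scattered point: (f(sigma(t)) - f(t)) / mu(t)."""
    step = mu(ts, t)
    if step == 0:
        raise ValueError("t is the maximum of this finite time scale")
    return (f(sigma(ts, t)) - f(t)) / step


ts = make_time_scale([0, 1, 2, 4, 7])
slope = delta_derivative(ts, lambda t: t * t, 2)  # (16 - 4) / 2
```

On the integers this quotient reduces to the forward difference $f(t+1) - f(t)$, which matches the discrete case described above.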

Let  and let  be an rd-continuous  -matrix function and  an rd-continuous function. Then, for any  , the initial value problem (IVP)

has a unique solution  defined on  . Further, if  is regressive, this solution exists on  .
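On a time scale consisting only of isolated points, forward solvability of a linear IVP can be seen by direct stepping, because at a right-scattered point $x^{\Delta}(t) = (x(\sigma(t)) - x(t))/\mu(t)$. The sketch below assumes a scalar equation $x^{\Delta} = a(t)x + q(t)$ on a finite list of increasing points; the function name `solve_linear_ivp` and the sample data are hypothetical.

```python
def solve_linear_ivp(ts, a, q, x0):
    """Step x^Delta = a(t) x + q(t), x(ts[0]) = x0, forward along isolated points.

    ts is an increasing list of points; returns the values x(t) for t in ts.
    """
    xs = [x0]
    for t, t_next in zip(ts, ts[1:]):
        step = t_next - t  # graininess mu(t) at a right-scattered point
        # x(sigma(t)) = x(t) + mu(t) * (a(t) x(t) + q(t))
        xs.append(xs[-1] + step * (a(t) * xs[-1] + q(t)))
    return xs


ts = [0, 1, 2, 4]
xs = solve_linear_ivp(ts, lambda t: 1.0, lambda t: 0.0, 1.0)
```

Each step multiplies the state by $1 + \mu(t)a(t)$, so stepping backward requires this factor to be nonzero; this is the scalar form of the regressivity condition.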

The solution of the corresponding matrix-valued IVP  ,  always exists for  , even if  is not regressive. In this case,  is defined only with  (see [12, 13]) and is called the Cauchy operator of the dynamic equation (2.1). If we suppose further that  is regressive, the Cauchy operator  is defined for all  .

We now recall the chain rule for multivariable functions on time scales; this result has been proved in [14]. Let  and  be continuously differentiable. Then  is delta differentiable and there holds

where  is the derivative (in the second variable of the function  ) in the usual sense and  is the scalar product.

We refer to [12, 15] for more information on the analysis on time scales.

2.2. Linear Equations with Small Nonlinear Perturbation

Let $\mathbb{T}$ be a time scale. We consider a class of nonlinear equations of the form

The homogeneous linear implicit dynamic equations (LIDEs) associated to (2.3) are

where  and  is rd-continuous in  . In the case where the matrices  are invertible for every  , we can multiply both sides of (2.3) by  to obtain an ordinary dynamic equation

which has been well studied. If there is at least one  such that  is singular, we cannot solve explicitly for the leading term  . In fact, we are concerned with a so-called ill-posed problem where the solutions of the Cauchy problem may exist only on a submanifold or may not exist at all. One way to solve this equation is to impose some further assumptions stated in the form of indices of the equation.

We introduce the so-called index-1 of (2.4). Suppose that rank  for all  and let  such that  is an isomorphism between  and  ;  . Let  be a projector onto  satisfying  . We can find such operators  and  in the following way: let the matrix  possess a singular value decomposition

where  ,  are orthogonal matrices and  is a diagonal matrix with singular values  on its main diagonal. Since  , on the above decomposition of  , we can choose the matrix  to be in  (see [16]). Hence, by putting  and  , we obtain  and  as required.
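As an illustration of how a singular value decomposition yields a projector onto a kernel, here is a minimal sketch using NumPy. It builds the orthogonal projector onto $\ker A$ from the right singular vectors belonging to zero singular values. This is one possible choice under stated assumptions, not necessarily the specific projector and operator the authors construct; the function name `kernel_projector` and the tolerance are hypothetical.

```python
import numpy as np


def kernel_projector(a, tol=1e-12):
    """Orthogonal projector onto ker(a), built from the SVD a = U @ diag(s) @ Vt."""
    _, s, vt = np.linalg.svd(a)
    rank = int(np.sum(s > tol))   # number of nonzero singular values
    null_basis = vt[rank:].T      # columns form an orthonormal basis of ker(a)
    return null_basis @ null_basis.T


a = np.array([[1.0, 0.0],
              [0.0, 0.0]])
q = kernel_projector(a)
```

The result satisfies $Q^2 = Q$ and $AQ = 0$, the two properties a projector onto $\ker A$ must have.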

Let

and  .

Under these notations, we have the following Lemma.

Lemma 2.2.

The following assertions are equivalent

(i)  ;

(ii) the matrix  is nonsingular;

(iii)  , for all  .

Proof.

(i) $\Rightarrow$ (ii) Let  and  such that  . This equation implies  . Since  and  , it follows that  . Hence,  which implies  . This means that  . Thus,  , that is, the matrix  is nonsingular.

(ii) $\Rightarrow$ (iii) It is obvious that  . We see that  and  . Thus,  and we have  .

Let  , that is,  and  . Since  , there is a  such that  and since  ,  . Therefore,  . Hence,  from which it follows that  . Thus,  and then  . So, we have (iii).

(iii) $\Rightarrow$ (i) is obvious.

Lemma 2.2 is proved.

Lemma 2.3.

Suppose that the matrix  is nonsingular. Then, there hold the following assertions:

Proof.

-

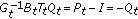

(1)

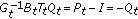

Noting that

, we get (2.8).

, we get (2.8). -

(2)

From

, it follows

, it follows  . Thus, we have (2.9).

. Thus, we have (2.9). -

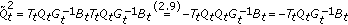

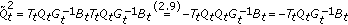

(3)

and

and  . This means that

. This means that  is a projector onto

is a projector onto  . From the proof of (iii), Lemma 2.2, we see that

. From the proof of (iii), Lemma 2.2, we see that  is the projector onto

is the projector onto  along

along  .

. -

(4)

Since

for any

for any  ,

,  (2.14)

(2.14)Therefore,

so we have (2.11). Finally,

so we have (2.11). Finally, (2.15)

(2.15)Thus, we get (2.12).

-

(5)

Let

be another linear transformation from

be another linear transformation from  onto

onto  satisfying

satisfying  to be an isomorphism from

to be an isomorphism from  onto

onto  and

and  a projector onto

a projector onto  . Denote

. Denote  . It is easy to see that

. It is easy to see that  (2.16)

(2.16)

Therefore,  . The proof of Lemma 2.3 is complete.

. The proof of Lemma 2.3 is complete.

Definition 2.4.

The LIDE (2.4) is said to be index-1 if for all  , the following conditions hold:

(i) rank  ,

(ii) ker  .

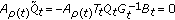

Now, we add the following assumptions.

Hypothesis 2.5.

(1) The homogeneous LIDE (2.4) is of index-1.

(2)  is rd-continuous and satisfies the Lipschitz condition,  (2.17)

where

Remark 2.6.

By item (2.13) of Lemma 2.3, the condition (2.18) is independent of the choice of  and  .

We assume further that we can choose the projector function  onto  such that  for all right-dense and left-scattered  ;  is differentiable at every  and  is rd-continuous. For each  , we have  . Therefore,

and the implicit equation (2.3) can be rewritten as

Thus, we should look for solutions of (2.3) from the space  :

Note that  does not depend on the choice of the projector function since the relations  and  are true for any two projectors  and  along the space  .

We now describe shortly the decomposition technique for (2.3) as follows.

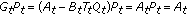

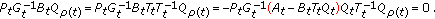

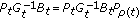

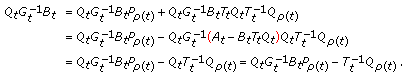

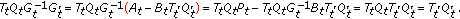

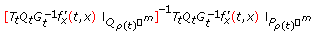

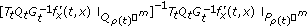

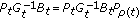

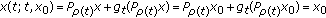

Since (2.3) has index-1 and by virtue of Lemma 2.2, we see that the matrices  are nonsingular for all  . Multiplying (2.3) by  and  , respectively, yields

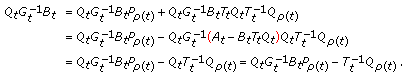

Therefore, by using the results of Lemma 2.3, we get

By denoting  , (2.23) becomes a dynamic equation on the time scale

and an algebraic relation

For fixed  and  , we consider a mapping  given by

We see that

for any  . Since  ,  is a contractive mapping. Hence, by the fixed point theorem, there exists a mapping  satisfying

and it is easy to see that  is rd-continuous in  .
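The fixed point argument above is constructive: iterating a contractive mapping converges to its unique fixed point. The following toy sketch shows this for a scalar contraction with Lipschitz constant $0.5$. The mapping $z \mapsto 0.5\sin z + u$ and the name `fixed_point` are illustrative assumptions, not the mapping used in the paper.

```python
import math


def fixed_point(mapping, start, tol=1e-12, max_iter=1000):
    """Iterate x <- mapping(x) until successive iterates differ by at most tol."""
    x = start
    for _ in range(max_iter):
        x_next = mapping(x)
        if abs(x_next - x) <= tol:
            return x_next
        x = x_next
    raise RuntimeError("iteration did not converge; is the mapping contractive?")


u = 1.0
# |d/dz (0.5 * sin z + u)| <= 0.5 < 1, so the mapping is a contraction on R.
z = fixed_point(lambda z: 0.5 * math.sin(z) + u, 0.0)
```

Solving the algebraic relation for each fixed pair of arguments in this way is what allows the algebraic component to be expressed as a function of the differential one.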

Moreover,

This implies

Thus,  is Lipschitz continuous with the Lipschitz constant  . Substituting  into (2.24), we obtain

It is easy to see that the right-hand side of (2.31) satisfies the Lipschitz condition with the Lipschitz constant

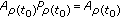

Applying the global existence theorem (see [12]), we see that (2.31), with the initial condition  , has a unique solution  .

Thus, we get the following theorem.

Theorem 2.7.

Let Hypothesis 2.5 and the assumptions on the projector  be satisfied. Then, (2.3) with the initial condition

has a unique solution. This solution is expressed by

where  is the solution of (2.31) with  .

We now describe the solution space of the implicit dynamic equation (2.3). Denote

Lemma 2.8.

There hold the following statements:

(i)  ,

(ii) If  for all  then  .

Proof.

(i) Let  , that is,  . We have

Hence,

From

it yields

Conversely, suppose that  , that is, there exists  such that  . We have to prove

or equivalently,

Indeed,

where we have already used a result of Lemma 2.3 that  is a projector onto  . So  .

(ii) Let  . Then  and  . Since  , we have  . This means that  . From the assumption  , it follows that  . The fact  implies that  . Thus  . The lemma is proved.

Remark 2.9.

(1) By virtue of Lemma 2.8, we find that the solution space  is independent of the choice of projector  and operator  .

(2) Since  and  , the initial condition (2.33) is equivalent to the condition  . This implies that the initial condition also does not depend on the choice of projectors.

(3) Note that if  is a solution of (2.3) with the initial condition (2.33), then  for all  . Conversely, let  and let  ,  , be the solution of (2.3) satisfying the initial condition  . We see that  . This means that there exists a solution of (2.3) passing through  .

2.3. Quasilinear Implicit Dynamic Equations

Now we consider a quasilinear implicit dynamic equation of the form

with  and  assumed to be continuously differentiable in the variable  and continuous in  .

Suppose that rank  for all  . We keep all assumptions on the projector  and operator  stated in Section 2.2.

Equation (2.43) is said to be of index-1 if the matrix

is invertible for every  and  .

Denote

Further introduce the set

containing all solutions of (2.43). The subspace  manifests its geometrical meaning

where  is the tangent space of  at the point  .

Suppose that (2.43) is of index-1. Then, by Lemma 2.2, this condition is equivalent to one of the following conditions:

(1)  ,

(2)  .

(3) Let  be a matrix such that the matrix  is invertible (we can choose  , e.g.). From the relation

it follows that

is invertible.

Lemma 2.10.

Suppose that the bounded linear operator triplet  is given, where  are Banach spaces. Then the operator  is invertible if and only if  is invertible.

Proof.

See [17, Lemma 1].

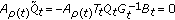

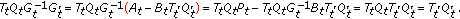

By virtue of (2.49) and Lemma 2.10, we get that

Now we come to split (2.43). Multiplying both sides of (2.43) by  and  , respectively, and putting  , we obtain

Consider the function

We see that

where  .

Let  be a vector satisfying  . Paying attention to  , we have

Therefore, by (2.50) we get  . This means that  is an isomorphism of  . By the implicit function theorem, the equation  has a unique solution  . Moreover, the function  is continuous in  and continuously differentiable in  . Its derivative is

Then, by substituting  into the first equation of (2.51) we come to

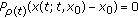

It is obvious that the ordinary dynamic equation (2.56) with the initial condition

is locally uniquely solvable and the solution  of (2.43) with the initial condition (2.33) can be expressed by  .

Now suppose further that  satisfies the Lipschitz condition in  and we can find a matrix  such that

is bounded for all  and  . Then, the right-hand side of (2.56) also satisfies the Lipschitz condition. Thus, from the global existence theorem (see [12]), (2.56) with the initial condition (2.57) has a unique solution defined on  .

Therefore, we have the following theorem.

Theorem 2.11.

Given an index-1 quasilinear implicit dynamic equation (2.43), then there holds the following.

(1) Equation (2.43) is locally solvable, that is, for any  ,  , there exists a unique solution  of (2.43), defined on  with some  ,  , satisfying the initial condition (2.33).

(2) Moreover, if  satisfies the Lipschitz condition in  and we can find a matrix  such that  (2.59)

is bounded, then this solution is defined on  and we have the expression

where  is the solution of (2.56) with  .

Remark 2.12.

(1) We note that the expression  depends only on the choice of the matrix  .

(2) The assumption that  is bounded for a matrix function  seems to be too strong. In Section 3, we show a condition for the global solvability via Lyapunov functions.

(3) If  , there exists  satisfying  . Hence,  . Therefore, by the same argument as in Section 2.2, we can prove that for every  , there is a unique solution passing through  .

3. Stability Theorems of Implicit Dynamic Equations

For our purposes, in this section we suppose that $\mathbb{T}$ is an upper unbounded time scale, that is, $\sup \mathbb{T} = \infty$. For a fixed  , denote  .

Consider an implicit dynamic equation of the form

where  and  .

First, we suppose that for each  , (3.1) with the initial condition

has a unique solution defined on  . Conditions ensuring the existence of a unique solution can be found in Section 2. We denote the solution with the initial condition (3.2) by  . Remember that we look for the solution of (3.1) in the space  . Let  for all  , which implies that (3.1) has the trivial solution $x \equiv 0$.

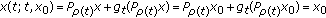

We mention again that  . Note that if  is the solution of (3.1) and (3.2) then  for all  .

Definition 3.1.

The trivial solution $x \equiv 0$ of (3.1) is said to be

(1)  -stable (resp.,  -stable) if, for each  and  , there exists a positive  such that  (resp.,  ) implies  for all  ,

(2)  -uniformly (resp.,  -uniformly) stable if it is  -stable (resp.,  -stable) and the number  mentioned in part (1) of this definition is independent of  ,

(3)  -asymptotically (resp.,  -asymptotically) stable if it is stable and for each  , there exist positive  such that the inequality  (resp.,  ) implies  . If  is independent of  , then the corresponding stability is  -uniformly asymptotically (resp.,  -uniformly asymptotically) stable,

(4)  -uniformly globally asymptotically (resp.,  -uniformly globally asymptotically) stable if for any  there exist functions  ,  such that  (resp.,  ) implies  for all  and if  (resp.,  ) then  for all  ,

(5) P-exponentially stable if there is a positive constant  with  such that for every  there exists an  such that the solution of (3.1) with the initial condition  satisfies  . If the constant  can be chosen independent of  , then this solution is called  -uniformly exponentially stable.

Remark 3.2.

From  and  , the notions of  -stable and  -stable as well as  -asymptotically stable and  -asymptotically stable are equivalent. Therefore, in the following theorems we will omit the prefixes  and  when talking about stability and asymptotic stability. However, the concept of  -uniform stability implies  -uniform stability if the matrices  are uniformly bounded and  -uniform stability implies  -uniform stability if the matrices  are uniformly bounded.

Denote

and  is the domain of definition of  .

Proposition 3.3.

The trivial solution $x \equiv 0$ of (3.1) is  -uniformly (resp.,  -uniformly) stable if and only if there exists a function  such that for each  and any solution  of (3.1) the inequality

holds, provided  (resp.,  ).

Proof.

We only need to prove the proposition for the  -uniformly stable case.

Sufficiency. Suppose there exists a function  satisfying (3.4) for each  ; we take  such that  , that is,  . If  is an arbitrary solution of (3.1) and  , then  , for all  .

Necessity. Suppose that the trivial solution $x \equiv 0$ of (3.1) is  -uniformly stable, that is, for each  there exists  such that for each  the inequality  implies  , for all  . For the sake of simplicity in computation, we choose  . Denote

It is clear that  is an increasing positive function in  . Further,  and by definition, there holds

By putting

it is seen that

Let  be the inverse function of  . It is clear that  also belongs to  .

For  , we denote  . If  , then  by  (remember that  does not imply that  ). Consider the case where  . If  , then by the relations (3.6) and (3.8) we have  . In particular,  which is a contradiction. Thus  ; this implies  , provided  .

The proposition is proved.

Similarly, we have the following proposition.

Proposition 3.4.

The trivial solution $x \equiv 0$ of (3.1) is  -stable (resp.,  -stable) if and only if for each  and any solution  of (3.1) there exists a function  such that there holds the following:

provided  (resp.,  ).

In order to use the Lyapunov function technique related to (3.1), we suppose that  . By using (2.3), we can define the derivative of the function  along every solution curve as follows:

Remark 3.5.

Note that even when the function  is independent of  and the vector field associated with the implicit dynamic equation (3.1) is autonomous, the derivative  may still depend on  .
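To illustrate the remark, the following minimal Python sketch uses the scalar explicit equation $x^\Delta = -x$ with $V(x) = x^2$. This setting is an assumption made here for simplicity; it is not the implicit equation (3.1), and the function name is hypothetical. At a right-scattered point with graininess $\mu$ the delta derivative is a difference quotient, so $V^\Delta = (\mu - 2)x^2$ depends on $\mu$ although neither $V$ nor the right-hand side depends on $t$.

```python
def delta_derivative_of_square(x, mu):
    """Delta derivative of V(x) = x**2 along x^Delta = -x at a right-scattered point.

    Assumes mu > 0 is the graininess at the current point (illustrative setup).
    """
    x_next = x + mu * (-x)            # x(sigma(t)) = x(t) + mu * x^Delta(t)
    return (x_next**2 - x**2) / mu    # (V(x(sigma(t))) - V(x(t))) / mu


if __name__ == "__main__":
    x = 2.0
    for mu in (0.1, 0.5, 1.0):
        # The closed form (mu - 2) * x**2 changes with mu although V does not depend on t.
        print(mu, delta_derivative_of_square(x, mu), (mu - 2.0) * x**2)
```

On a time scale whose graininess varies from point to point, the same state $x$ therefore yields different values of the derivative at different times.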

Theorem 3.6.

Assume that there exist a constant  and a function  being rd-continuous and a function  ,  defined on  satisfying

(1)  for all  and  ,

(2)  , for any  and  .

Assume further that (3.1) is locally solvable. Then, (3.1) is globally solvable, that is, every solution with the initial condition (3.2) is defined on  .

Proof.

Denote

By the condition (2), we have

Therefore, for all

From the condition (1), it follows that

or

The last inequality shows that the solution  can be extended to  , that is, (3.1) is globally solvable.

Theorem 3.7.

Assume that there exist a function  being rd-continuous and a function  ,  defined on  satisfying the conditions

(1)  for all  ,

(2)  for all  and  ,

(3)  for any  and  .

Assume further that (3.1) is locally solvable. Then the trivial solution of (3.1) is stable.

Proof.

By virtue of Theorem 3.6 and the conditions (2) and (3), it follows that (3.1) is globally solvable. Suppose on the contrary that the trivial solution  of (3.1) is not stable. Then, there exists an  such that for all  there exists a solution  of (3.1) satisfying  and  for some  . Put  .

By the assumption that  and  is rd-continuous, we can find  such that if  then  . With given  , let  be a solution of (3.1) such that  and  for some  .

Since  and by the condition (3),

Therefore,  . Further,  and by the condition (2) we have  . This is a contradiction. The theorem is proved.

Theorem 3.8.

Assume that there exist a function  being rd-continuous and functions  ,  defined on  ,  such that

satisfying the conditions

(1)  uniformly in  ,

(2)  for all  and  ,

(3)  for any  and  .

Assume further that (3.1) is locally solvable. Then the trivial solution of (3.1) is asymptotically stable.

Proof.

As before, Theorem 3.6 and the conditions (2) and (3) imply that (3.1) is globally solvable.

Since  , the trivial solution of (3.1) is stable by Theorem 3.7. Consider a bounded solution  of (3.1). First, we show that  . Assume on the contrary that  . From the condition (1), it follows that  . By the condition (3), we have

as  , which is a contradiction.

Thus,  . Further, from the condition (3), for any  we get

This means that  is a decreasing function. Consequently,

from which it follows that  by the condition (2).

Theorem 3.9.

Suppose that there exist a function  ,  defined on  , and a function  such that

(1)  uniformly in  and  for all  and  ,

(2)  for any  and  .

Assume further that (3.1) is locally solvable. Then, the trivial solution of (3.1) is  -uniformly stable.

Proof.

The proof is similar to that of Theorem 3.7, with the remark that since  uniformly in  , we can find  such that if  then

The proof is complete.

Remark 3.10.

The conclusion of Theorem 3.9 is still true if the condition (1) is replaced by "there exist two functions  ,  defined on  and a function  such that  for all  and  ".

We now present a theorem on uniform global asymptotic stability.

Theorem 3.11.

Suppose that there exist functions  ,  defined on  , and a function  satisfying

(1)  for all  and  ,

(2)  for any  and  .

Assume further that (3.1) is locally solvable. Then, the trivial solution of (3.1) is  -uniformly globally asymptotically stable.

Proof.

Let  be given. Define  and

(  is not necessarily in  ).

Let  be a solution of (3.1) with  . From the condition (2), we see that

Therefore,

Hence,  for all  .

Because the trivial solution of (3.1) is  -uniformly stable, we only need to show that there exists a  such that  . Assume that such a  does not exist, that is,  for all  . From the condition (2), we get

Since  ,

which contradicts the definition of  in (3.21). The proof is complete.

When  is not differentiable, one supposes that there exists a  -differentiable projector  onto  and  is rd-continuous on  ; moreover,  for all  . Let  .

We choose matrix functions  such that  is an isomorphism between  and  and the matrix  is invertible. Define

where  (see (2.51)).

From now on, we keep the above assumptions on the operators  whenever  is mentioned.

By the same argument as Theorem 3.6, we have the following theorem.

Theorem 3.12.

Assume that there exist a constant  and a function  being rd-continuous and a function  ,  defined on  satisfying

(1)  for all  and  ,

(2)  , for any  and  .

Assume further that (3.1) is locally solvable. Then, (3.1) is globally solvable.

Theorem 3.13.

Assume that (3.1) is locally solvable. Then, the trivial solution  of (3.1) is stable if there exist a function  being rd-continuous and a function  ,  defined on  such that

(1)  for all  ,

(2)  for all  and  ,

(3)  for all  and  .

Proof.

Assume that there is a function  satisfying the assertions (1), (2), and (3) but the trivial solution  of (3.1) is not stable. Then, there exist a positive  and a  such that  ; there exists a solution  of (3.1) satisfying  and  , for some  . Let  . Since  , it is possible to find a  satisfying  when  . Consider the solution  satisfying  and  for a  .

From the assumption (3), it follows that

This implies

We get a contradiction because  when  .

The proof of the theorem is complete.

Theorem 3.14.

Assume that (3.1) is locally solvable. If there exist two functions  ,  defined on  and a function  being rd-continuous such that

(1)  for all  and  ,

(2)  for all  and  ,

then the trivial solution of (3.1) is  -uniformly stable.

Proof.

The proof is similar to the one of Theorem 3.9.

Theorem 3.15.

Suppose that there exist functions  ,  defined on  and a function  satisfying

(1)  for all  and  ,

(2)  for any  and  .

Assume further that (3.1) is locally solvable. Then, the trivial solution of (3.1) is  -uniformly globally asymptotically stable.

Proof.

The proof is similar to that of Theorem 3.11.

It is difficult to establish converse results for Theorems 3.7 to 3.15, that is, to show that if the trivial solution of (3.1) is stable, then there exists a function  satisfying the assertions of the above theorems. However, if the structure of the time scale  is rather simple, we have the following theorem.

Theorem 3.16.

Suppose that  contains no right-dense points and the trivial solution  of (3.1) is  -uniformly stable. Then, there exists a function  being rd-continuous satisfying the conditions (1), (2), and (3) of Theorem 3.13, where  is an open neighborhood of 0 in  .

Proof.

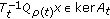

Suppose the trivial solution of (3.1) is  -uniformly stable. Due to Proposition 3.3, there exist functions  such that for any solution  of (3.1), we have

provided  .

Let  and  . For any  satisfying  and  , we put

where  is the unique solution of (3.1) satisfying the initial condition  . It is seen that  is defined for all  satisfying  ,  , and  .

Let  . By the definition,  . From (2.60),  for all  . In particular,  . Thus,  . Hence, we have the assertion (2) of the theorem.

Due to the unique solvability of (3.1), we have  with  . Therefore,  and

This implies

The proof is complete.

We now give an example of using Lyapunov functions to test the stability of equations. The following result shows that the stability of a linear equation is preserved under nonlinear perturbations whose Lipschitz constant is sufficiently small.

Consider a nonlinear equation of the form (2.3)

where  and  are constant matrices with ind  ,  , and  satisfying the Lipschitz condition

where  is sufficiently small. Let  be defined by (2.9) with  and  . By Theorem 2.7, we see that there exists a unique solution satisfying the condition  for any  .

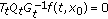

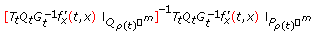

We also consider the homogeneous equation associated with (3.33)

and suppose this equation has index-1. As in Section 2, multiplying (3.33) by  we get

where  .

Note that the general solution of (3.35) is

where  denotes the set of right-scattered points of the interval  .
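The structure of such a solution formula can be illustrated in the scalar case. The sketch below is an illustration under stated assumptions, not the matrix formula of the paper: it evaluates the standard time-scale exponential for $x^\Delta = \lambda x$ on a time scale given as a finite union of closed intervals, multiplying an ordinary exponential over each dense stretch by a factor $1 + \mu\lambda$ at each right-scattered point with graininess $\mu$. The function name and the interval encoding are hypothetical.

```python
import math


def ts_exponential(lam, intervals):
    """Scalar time-scale exponential from the first to the last point of the time scale.

    `intervals` is a sorted list of (a_i, b_i) with a_i <= b_i < a_{i+1};
    an isolated point is written as (a, a). This encoding is an assumption
    made for the sketch.
    """
    value = 1.0
    for i, (a, b) in enumerate(intervals):
        value *= math.exp(lam * (b - a))      # continuous flow over the dense part [a, b]
        if i + 1 < len(intervals):
            mu = intervals[i + 1][0] - b      # graininess at the right-scattered point b
            value *= 1.0 + mu * lam           # one jump: x(sigma(b)) = (1 + mu * lam) * x(b)
    return value


if __name__ == "__main__":
    print(ts_exponential(-0.5, [(0, 0), (1, 1), (2, 2), (3, 3)]))  # integers 0..3
    print(ts_exponential(-0.5, [(0, 3)]))                          # interval [0, 3]
    print(ts_exponential(-0.5, [(0, 1), (2, 3)]))                  # mixed time scale
```

For a purely discrete time scale only the product of jump factors remains, and for an interval only the ordinary exponential remains.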

Denote  . It is easy to show that the trivial solution  of (3.35) is  -uniformly exponentially stable if and only if  , where  is the domain of uniform exponential stability of  . For the domain of exponential stability of a time scale, we refer to [10, 18, 19]. The definition of exponential stability implies that the graininess function of the time scale  is bounded above. Let  .

We denote the set

and suppose  . Since  , this condition implies that (3.35) is  -uniformly exponentially stable.

If  , define

where the matrix  is supposed to be symmetric positive definite. It is clear that  is symmetric positive definite.

Since  , the above series is convergent. Further, for any  we have

Thus,

Letting  and noting that  , we obtain

In the case where  and  is symmetric positive definite, by putting

one can easily check that the matrix  also satisfies (3.42) and that  is symmetric and positive definite.
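For intuition, consider the purely discrete case $\mathbb{T} = h\mathbb{Z}$ with an explicit linear equation $x^\Delta = Mx$, so that $x(t+h) = Bx(t)$ with $B = I + hM$. This setting is an assumption made for the sketch; the matrices below are illustrative and are not those of (3.35) or (3.42). When the spectral radius of $B$ is less than one, the series $H = \sum_{k \ge 0} (B^\top)^k Q B^k$ converges and satisfies the discrete Lyapunov identity $B^\top H B - H = -Q$. The helper names are hypothetical.

```python
def matmul(X, Y):
    """Product of two matrices stored as lists of rows."""
    return [[sum(X[i][k] * Y[k][j] for k in range(len(Y)))
             for j in range(len(Y[0]))] for i in range(len(X))]


def transpose(X):
    """Transpose of a matrix stored as a list of rows."""
    return [list(col) for col in zip(*X)]


def lyapunov_series(B, Q, terms=200):
    """Partial sum of sum_k (B^T)^k Q B^k with a fixed number of terms."""
    n = len(B)
    H = [[0.0] * n for _ in range(n)]
    term = [row[:] for row in Q]              # the k = 0 term is Q itself
    Bt = transpose(B)
    for _ in range(terms):
        H = [[H[i][j] + term[i][j] for j in range(n)] for i in range(n)]
        term = matmul(matmul(Bt, term), B)    # next term: B^T (previous term) B
    return H


h = 0.5                                       # constant graininess (assumed)
M = [[-1.0, 0.5], [0.0, -2.0]]                # illustrative coefficient matrix
B = [[(1.0 if i == j else 0.0) + h * M[i][j] for j in range(2)] for i in range(2)]
Q = [[1.0, 0.0], [0.0, 1.0]]                  # a symmetric positive definite choice
H = lyapunov_series(B, Q)

# Residual of the identity B^T H B - H + Q; its entries should be close to zero.
BtHB = matmul(matmul(transpose(B), H), B)
residual = [[BtHB[i][j] - H[i][j] + Q[i][j] for j in range(2)] for i in range(2)]
print(residual)
```

The quadratic form $x^\top H x$ then decreases by $x^\top Q x$ at every step of the recursion, which is the discrete counterpart of a negative definite derivative along solutions.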

Theorem 3.17.

Suppose that  and the homogeneous equation (3.35) is of index-1 and the constant  is sufficiently small. Then, the trivial solution  of (3.33) is  -uniformly globally asymptotically stable.

Proof.

Let  be a symmetric and positive definite (constant) matrix satisfying (3.42). Consider the Lyapunov function  . The derivative of  along the solution of (3.33) is

From the Lipschitz condition and (2.25), it is seen that  where  . Therefore,

Combining this inequality and the above estimate, we see that when  is sufficiently small there exists  such that

By Theorem 3.15, (3.33) is  -uniformly globally asymptotically stable.
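The mechanism of the proof can be observed numerically in a scalar toy problem. The sketch below assumes the time scale $h\mathbb{Z}$ and the explicit equation $x^\Delta = -x + L\sin x$; both are illustrative assumptions, not equation (3.33). The perturbation $L\sin x$ is Lipschitz with constant $L$, and for small $L$ the Lyapunov function $V(x) = x^2$ decreases along the computed solution. All names are hypothetical.

```python
import math


def simulate(x0, h=0.5, lipschitz=0.1, steps=40):
    """Iterate x(t + h) = x(t) + h * (-x(t) + L * sin(x(t))) on the time scale hZ."""
    xs = [x0]
    for _ in range(steps):
        x = xs[-1]
        xs.append(x + h * (-x + lipschitz * math.sin(x)))
    return xs


xs = simulate(3.0)
V = [x * x for x in xs]                       # V(x) = x^2 evaluated along the solution

# Report whether V is strictly decreasing and where the solution ends up.
print(all(b < a for a, b in zip(V, V[1:])), xs[-1])
```

Increasing `lipschitz` or `h` in this toy setting is a simple way to see how the decrease of $V$ can fail once the perturbation or the graininess is no longer small.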

Example 3.18.

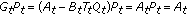

Let  and consider

with

We have  span  , rank  for all  . It is easy to verify that  is the canonical projector onto  ,  . Let us choose  . We see that

Since  ,  , (3.47) has index-1.

It is obvious that  . Further,  . Thus, according to Theorem 2.7, for each  , (3.47) with the initial condition  has a unique solution.

It is easy to compute  ,  ,  , where  , and  .

Therefore,  satisfies  . Moreover, we have

Let the Lyapunov function be  .

Putting  , we have  and

Hence,

For any solution  of (3.47) and  (noting that  ), we have:

(+) if  is right-scattered then

,

(+) if  is right-dense then  , where  .

In both cases, we have  , so the trivial solution of (3.47) is  -uniformly stable by Theorem 3.14.

Note that if we let  then the result is still true. Indeed, by simple calculations we obtain

(a)  , where  is defined by  ,

(b)  . Thus,

Therefore, the above result follows.

4. Conclusion

We have studied criteria ensuring stability for a class of quasilinear dynamic equations on time scales. The converse of the stability theorems in Section 3 remains an open problem for an arbitrary time scale, although it holds for discrete and continuous time scales.

References

[1] März R: Criteria for the trivial solution of differential algebraic equations with small nonlinearities to be asymptotically stable. Journal of Mathematical Analysis and Applications 1998,225(2):587–607. 10.1006/jmaa.1998.6055

[2] Campbell SL: Singular Systems of Differential Equations I, II, Research Notes in Mathematics. Volume 40. Pitman, London, UK; 1980:vii+176.

[3] Griepentrog E, März R: Differential-Algebraic Equations and Their Numerical Treatment. Volume 88. Teubner, Leipzig, Germany; 1986:220.

[4] Kunkel P, Mehrmann V: Differential-Algebraic Equations, EMS Textbooks in Mathematics. European Mathematical Society House, Zürich, Switzerland; 2006:viii+377.

[5] Loi LC, Du NH, Anh PK: On linear implicit non-autonomous systems of difference equations. Journal of Difference Equations and Applications 2002,8(12):1085–1105. 10.1080/1023619021000053962

[6] Anh PK, Hoang DS: Stability of a class of singular difference equations. International Journal of Difference Equations 2006,1(2):181–193.

[7] Kloeden PE, Zmorzynska A: Lyapunov functions for linear nonautonomous dynamical equations on time scales. Advances in Difference Equations 2006, 2006: 1–10.

[8] Lakshmikantham V, Sivasundaram S, Kaymakcalan B: Dynamic Systems on Measure Chains, Mathematics and its Applications. Volume 370. Kluwer Academic, Dordrecht, The Netherlands; 1996:x+285.

[9] Bohner M, Peterson A (Eds): Advances in Dynamic Equations on Time Scales. Birkhäuser, Boston, Mass, USA; 2003:xii+348.

[10] Bohner M, Stević S: Linear perturbations of a nonoscillatory second-order dynamic equation. Journal of Difference Equations and Applications 2009,15(11–12):1211–1221. 10.1080/10236190903022782

[11] Bohner M, Stević S: Trench's perturbation theorem for dynamic equations. Discrete Dynamics in Nature and Society 2007, 2007:-11.

[12] Hilger S: Analysis on measure chains—a unified approach to continuous and discrete calculus. Results in Mathematics 1990,18(1–2):18–56.

[13] Pötzsche C: Exponential dichotomies of linear dynamic equations on measure chains under slowly varying coefficients. Journal of Mathematical Analysis and Applications 2004,289(1):317–335. 10.1016/j.jmaa.2003.09.063

[14] Pötzsche C: Chain rule and invariance principle on measure chains. Journal of Computational and Applied Mathematics 2002,141(1–2):249–254. 10.1016/S0377-0427(01)00450-2

[15] Pötzsche C: Analysis Auf Maßketten. Universität Augsburg; 2002.

[16] Dai L: Singular Control Systems, Lecture Notes in Control and Information Sciences. Volume 118. Springer, Berlin, Germany; 1989:x+332.

[17] Du NH, Linh VH: Stability radii for linear time-varying differential-algebraic equations with respect to dynamic perturbations. Journal of Differential Equations 2006,230(2):579–599. 10.1016/j.jde.2006.07.004

[18] Doan TS, Kalauch A, Siegmund S, Wirth FR: Stability radii for positive linear time-invariant systems on time scales. Systems & Control Letters 2010,59(3–4):173–179. 10.1016/j.sysconle.2010.01.002

[19] Pötzsche C, Siegmund S, Wirth F: A spectral characterization of exponential stability for linear time-invariant systems on time scales. Discrete and Continuous Dynamical Systems 2003,9(5):1223–1241.

Acknowledgment

This work was done under the support of NAFOSTED no 101.02.63.09.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Du, N., Liem, N., Chyan, C. et al. Lyapunov Stability of Quasilinear Implicit Dynamic Equations on Time Scales. J Inequal Appl 2011, 979705 (2011). https://doi.org/10.1155/2011/979705

DOI: https://doi.org/10.1155/2011/979705
