- Research Article

- Open access

On Some Improvements of the Jensen Inequality with Some Applications

Journal of Inequalities and Applications volume 2009, Article number: 323615 (2009)

Abstract

An improvement of the Jensen inequality for convex and monotone functions is given, together with various applications to means. Similar results for related inequalities of Jensen type are also obtained. Some applications of the Cauchy mean and the Jensen inequality are also discussed.

1. Introduction

The well-known Jensen inequality for convex functions is stated as follows.

Theorem 1.1.

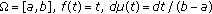

If $(\Omega, \mathcal{A}, \mu)$ is a probability space and if $f \in L^1(\mu)$ is such that $a \le f(t) \le b$ for all $t \in \Omega$, $-\infty \le a < b \le \infty$, then

$$\varphi\left(\int_\Omega f\,d\mu\right) \le \int_\Omega \varphi(f)\,d\mu \tag{1.1}$$

is valid for any convex function $\varphi\colon [a, b] \to \mathbb{R}$. In the case when $\varphi$ is strictly convex on $[a, b]$, one has equality in (1.1) if and only if $f$ is constant almost everywhere on $\Omega$.
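As a quick numerical illustration of (1.1), the following minimal sketch assumes a finite probability space, so that the integrals become weighted sums. The helper name `jensen_gap` and the sample data are hypothetical and serve only to illustrate the inequality and its equality case.

```python
import math

def jensen_gap(phi, xs, ps):
    """Return sum_i p_i*phi(x_i) - phi(sum_i p_i*x_i) for probability weights ps."""
    mean = sum(p * x for p, x in zip(ps, xs))
    return sum(p * phi(x) for p, x in zip(ps, xs)) - phi(mean)

# Hypothetical sample data: values x_i with weights p_i summing to 1.
xs = [0.5, 1.0, 2.0, 4.0]
ps = [0.1, 0.2, 0.3, 0.4]

# exp is strictly convex, so a non-constant sample gives a strictly positive gap.
gap_exp = jensen_gap(math.exp, xs, ps)

# A constant sample is the equality case: the gap vanishes up to rounding.
gap_const = jensen_gap(math.exp, [2.0] * 4, ps)
```

The gap is nonnegative for every convex `phi`, and for a strictly convex `phi` it is zero only when the sample is constant.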

Here and throughout the paper we suppose that all integrals exist. By considering the difference of the two sides of (1.1) as a functional, Anwar and Pečarić [1] proved an interesting log-convexity result. This result can be stated for integrals as follows.

Theorem 1.2.

Let  be a probability space and

be a probability space and  is such that

is such that  for all

for all  . Define

. Define

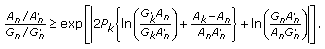

and let  be positive. Then

be positive. Then  is log-convex, that is, for

is log-convex, that is, for  the following is valid

the following is valid

The following improvement of (1.1) was obtained in [2].

Theorem 1.3.

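Let the conditions of Theorem 1.1 be fulfilled. Then

$$\int_\Omega \varphi(f)\,d\mu - \varphi(\bar{f}) \ge \left|\, \int_\Omega \left|\varphi(f) - \varphi(\bar{f})\right| d\mu - \left|\varphi'_+(\bar{f})\right| \int_\Omega \left|f - \bar{f}\right| d\mu \,\right|, \tag{1.4}$$

where $\varphi'_+$ represents the right-hand derivative of $\varphi$ and

$$\bar{f} = \int_\Omega f\,d\mu.$$

If $\varphi$ is concave, then the left-hand side of (1.4) should be $\varphi(\bar{f}) - \int_\Omega \varphi(f)\,d\mu$.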
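The refinement can be checked numerically in a discrete setting. The sketch below assumes a finite probability space and the usual form of the bound from [2], namely that the Jensen gap dominates $\bigl|\sum_i p_i|\varphi(x_i) - \varphi(\bar{x})| - |\varphi'_+(\bar{x})| \sum_i p_i |x_i - \bar{x}|\bigr|$. The function name `refined_jensen` and the data are hypothetical.

```python
import math

def refined_jensen(phi, dphi, xs, ps):
    """Return (Jensen gap, refinement bound) for a discrete distribution.

    dphi is the (right-hand) derivative of the convex function phi.
    """
    mean = sum(p * x for p, x in zip(ps, xs))
    gap = sum(p * phi(x) for p, x in zip(ps, xs)) - phi(mean)
    # Weighted mean absolute deviation of phi(x_i) from phi(mean).
    abs_phi = sum(p * abs(phi(x) - phi(mean)) for p, x in zip(ps, xs))
    # Weighted mean absolute deviation of x_i from the mean.
    abs_f = sum(p * abs(x - mean) for p, x in zip(ps, xs))
    bound = abs(abs_phi - abs(dphi(mean)) * abs_f)
    return gap, bound

# Hypothetical sample data with weights summing to 1.
xs = [0.5, 1.0, 2.0, 4.0]
ps = [0.1, 0.2, 0.3, 0.4]

# phi = exp, whose derivative is again exp.
gap, bound = refined_jensen(math.exp, math.exp, xs, ps)
```

Since the bound is nonnegative, `gap >= bound` is at least as strong as the plain inequality `gap >= 0`.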

In this paper, we give another proof and an extension of Theorem 1.2, as well as improvements of Theorem 1.3 for monotone convex functions, with some applications. We also give applications of the Jensen inequality to divergence measures in information theory and to related Cauchy means.

2. Another Proof and Extension of Theorem 1.2

In fact, Theorem 1.2 for  and  was first initiated by Simić in [3].

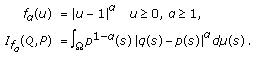

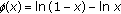

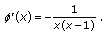

Moreover, in his proof, he used convex functions defined on  (see [3, Theorem 1]). In his proof, he used the following function:

$$\varphi(x) = u^2\,\frac{x^s}{s(s-1)} + 2uw\,\frac{x^r}{r(r-1)} + w^2\,\frac{x^t}{t(t-1)}, \tag{2.1}$$

where $r = (s+t)/2$ and $u, w, r, s, t$ are real with $r, s, t \notin \{0, 1\}$.

In [1] we gave a correct proof by using an extension of (2.1), so that it is defined on  .

Moreover, we can give another proof so that we use only (2.1) but without using convexity as in [3].

Proof of Theorem 1.2.

Consider the function $\varphi$ defined, as in [3], by (2.1).

Now

$$\varphi''(x) = \left(u\,x^{s/2-1} + w\,x^{t/2-1}\right)^2 \ge 0, \quad x > 0, \tag{2.2}$$

that is, $\varphi$ is convex. By using (1.1) we get

$$u^2 \Lambda_s + 2uw\,\Lambda_r + w^2 \Lambda_t \ge 0. \tag{2.3}$$

Therefore, (2.3) is valid for all $u, w \in \mathbb{R}$. Now since the left-hand side of (2.3) is a quadratic form, by its nonnegativity one has

$$\Lambda_r^2 = \Lambda_{(s+t)/2}^2 \le \Lambda_s \Lambda_t. \tag{2.4}$$

Since we have $\lim_{s \to 0} \Lambda_s = \Lambda_0$ and $\lim_{s \to 1} \Lambda_s = \Lambda_1$, we also have that (2.4) is valid for $r, s, t \in \mathbb{R}$. So $s \mapsto \Lambda_s$ is a log-convex function in the Jensen sense on $\mathbb{R}$.

Moreover, continuity of $\Lambda_s$ implies log-convexity, that is, the following is valid for $-\infty < r < s < t < \infty$:

$$\Lambda_s^{\,t-r} \le \Lambda_r^{\,t-s}\,\Lambda_t^{\,s-r}. \tag{2.5}$$
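The log-convexity statement can be explored numerically. The sketch below assumes the definition of $\Lambda_s$ used in [1], namely $\Lambda_s = \bigl(\int f^s\,d\mu - (\int f\,d\mu)^s\bigr)/(s(s-1))$ for $s \ne 0, 1$, with the limiting cases $\Lambda_0 = \log \int f\,d\mu - \int \log f\,d\mu$ and $\Lambda_1 = \int f \log f\,d\mu - \int f\,d\mu \,\log \int f\,d\mu$. This form is an assumption here, since the defining display is not reproduced in the text. The name `Lambda`, the grid, and the data are hypothetical.

```python
import math
from itertools import combinations

def Lambda(s, xs, ps):
    """Discrete Lambda_s for positive values xs with probability weights ps (assumed form)."""
    mean = sum(p * x for p, x in zip(ps, xs))
    if s == 0:
        return math.log(mean) - sum(p * math.log(x) for p, x in zip(ps, xs))
    if s == 1:
        return sum(p * x * math.log(x) for p, x in zip(ps, xs)) - mean * math.log(mean)
    return (sum(p * x ** s for p, x in zip(ps, xs)) - mean ** s) / (s * (s - 1))

# Hypothetical positive sample with weights summing to 1.
xs = [0.5, 1.0, 2.0, 4.0]
ps = [0.1, 0.2, 0.3, 0.4]

# The grid is sorted, so combinations() yields triples with r < s < t.
grid = [-2.0, -1.0, 0.0, 0.5, 1.0, 2.0, 3.0]
violations = [
    (r, s, t)
    for r, s, t in combinations(grid, 3)
    if Lambda(s, xs, ps) ** (t - r)
    > Lambda(r, xs, ps) ** (t - s) * Lambda(t, xs, ps) ** (s - r) * (1 + 1e-9)
]
```

An empty `violations` list is consistent with the three-point inequality for every triple on the grid, including the limiting indices 0 and 1.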

Note that this result was used in [4] to obtain the corresponding Cauchy means. Moreover, we can extend the above result.

Theorem 2.1.

Let the conditions of Theorem 1.2 be fulfilled and let $s_1, \dots, s_n$ be real numbers. Then

$$\left|a_{ij}\right|_k \ge 0 \quad \text{for } k = 1, \dots, n, \tag{2.6}$$

where $|a_{ij}|_k$ denotes the determinant of order $k$ with elements $a_{ij}$ and $a_{ij} = \Lambda_{(s_i + s_j)/2}$.

Proof.

Consider the function

$$\varphi(x) = \sum_{i,j=1}^{k} u_i u_j\, \frac{x^{s_{ij}}}{s_{ij}(s_{ij}-1)}$$

for $x > 0$, $u_i \in \mathbb{R}$ and $s_{ij} = (s_i + s_j)/2 \notin \{0, 1\}$.

So, it holds that

$$\varphi''(x) = \sum_{i,j=1}^{k} u_i u_j\, x^{s_{ij}-2} = \left(\sum_{i=1}^{k} u_i\, x^{s_i/2 - 1}\right)^2 \ge 0.$$

So $\varphi$ is a convex function, and as a consequence of (1.1), one has

$$\sum_{i,j=1}^{k} u_i u_j\, \Lambda_{s_{ij}} \ge 0.$$

Therefore, the matrix $A = [a_{ij}]$ ($A$ denotes the $k \times k$ matrix with elements $a_{ij} = \Lambda_{s_{ij}}$) is nonnegative semidefinite and (2.6) is valid for $s_{ij} \notin \{0, 1\}$. Moreover, since $\Lambda_{s_{ij}}$ is continuous for all $s_{ij}$, (2.6) is valid for all $s_i \in \mathbb{R}$.
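The semidefiniteness argument can be illustrated numerically. The sketch below assumes a finite probability space and the form $\Lambda_s = \bigl(\sum_i p_i x_i^s - (\sum_i p_i x_i)^s\bigr)/(s(s-1))$ for $s \ne 0, 1$, with the usual limiting cases at $0$ and $1$. It builds the matrix with entries $\Lambda_{(s_i+s_j)/2}$ and computes its leading principal minors. The names `Lambda` and `det`, the exponents, and the data are hypothetical.

```python
import math

def Lambda(s, xs, ps):
    """Discrete Lambda_s for positive values xs with probability weights ps (assumed form)."""
    mean = sum(p * x for p, x in zip(ps, xs))
    if s == 0:
        return math.log(mean) - sum(p * math.log(x) for p, x in zip(ps, xs))
    if s == 1:
        return sum(p * x * math.log(x) for p, x in zip(ps, xs)) - mean * math.log(mean)
    return (sum(p * x ** s for p, x in zip(ps, xs)) - mean ** s) / (s * (s - 1))

def det(m):
    """Determinant by Laplace expansion along the first row (fine for small matrices)."""
    if len(m) == 1:
        return m[0][0]
    return sum(
        (-1) ** j * m[0][j] * det([row[:j] + row[j + 1:] for row in m[1:]])
        for j in range(len(m))
    )

# Hypothetical positive sample and exponents s_1, s_2, s_3.
xs = [0.5, 1.0, 2.0, 4.0]
ps = [0.1, 0.2, 0.3, 0.4]
ss = [-1.0, 0.5, 2.0]

# Matrix with entries Lambda_{(s_i + s_j)/2}.
A = [[Lambda((si + sj) / 2, xs, ps) for sj in ss] for si in ss]

# Leading principal minors of order k = 1, 2, 3.
minors = [det([row[:k] for row in A[:k]]) for k in range(1, len(ss) + 1)]
```

The minor of order 2 is $\Lambda_{s_1}\Lambda_{s_2} - \Lambda_{(s_1+s_2)/2}^2$, which is the midpoint form of log-convexity obtained in the proof of Theorem 1.2.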

Remark 2.2.

In Theorem 2.1, if we set $n = 2$, we get Theorem 1.2.

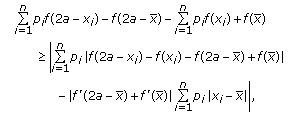

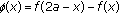

3. Improvements of the Jensen Inequality for Monotone Convex Function

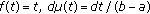

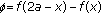

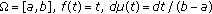

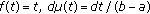

In this section and in the following section, we denote $\bar{x} = \sum_{i=1}^{n} p_i x_i$ and $\bar{f} = \int_\Omega f\,d\mu$.

Theorem 3.1.

If  is a probability space and if

is a probability space and if  is such

is such  for

for  and if

and if for

for  (

( is measurable, i.e.,

is measurable, i.e.,  ),

),  then

then

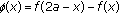

where

for monotone convex function  . If

. If  is monotone concave, then the left-hand side of (3.1) should be

is monotone concave, then the left-hand side of (3.1) should be  .

.

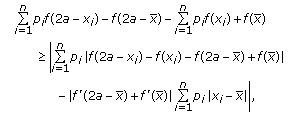

Proof.

Consider the case when  is nondecreasing on  . Then

Similarly,

Now from (1.4), (3.3), and (3.4) we get (3.1).

The case when  is nonincreasing can be treated in a similar way.
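The idea behind the proof can be sketched in a discrete setting. For a nondecreasing convex function, $\varphi(x_i) - \varphi(\bar{x})$ has the same sign as $x_i - \bar{x}$, so the absolute values in the refinement of [2] can be replaced by signed sums over the index sets where $x_i \ge \bar{x}$ and $x_i < \bar{x}$. The code below assumes a finite probability space and illustrates this splitting only; it does not claim to reproduce the exact displays (3.3) and (3.4). All names and data are hypothetical.

```python
import math

def sign(v):
    """Return +1.0 for v >= 0 and -1.0 otherwise."""
    return 1.0 if v >= 0 else -1.0

def split_terms(phi, dphi, xs, ps):
    """Return (gap, signed_phi, abs_phi, bound) for a nondecreasing convex phi."""
    mean = sum(p * x for p, x in zip(ps, xs))
    gap = sum(p * phi(x) for p, x in zip(ps, xs)) - phi(mean)
    # Signed sums: each term carries the sign of x_i - mean.
    signed_phi = sum(p * sign(x - mean) * (phi(x) - phi(mean)) for p, x in zip(ps, xs))
    signed_f = sum(p * sign(x - mean) * (x - mean) for p, x in zip(ps, xs))
    # Reference value computed with absolute values, for comparison.
    abs_phi = sum(p * abs(phi(x) - phi(mean)) for p, x in zip(ps, xs))
    # dphi(mean) >= 0 because phi is nondecreasing, so no absolute value is needed.
    bound = abs(signed_phi - dphi(mean) * signed_f)
    return gap, signed_phi, abs_phi, bound

# Hypothetical sample data; exp is nondecreasing and convex.
xs = [0.5, 1.0, 2.0, 4.0]
ps = [0.1, 0.2, 0.3, 0.4]
gap, signed_phi, abs_phi, bound = split_terms(math.exp, math.exp, xs, ps)
```

For a nonincreasing function the signs flip on both terms, which is why that case is handled in the same way.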

Of course a discrete inequality is a simple consequence of Theorem 3.1.

Theorem 3.2.

Let  be a monotone convex function,  . If  for  , then

If  is monotone concave, then the left-hand side of (3.5) should be

The following improvement of the Hermite-Hadamard inequality is valid [5].

Corollary 3.3.

Let  be a differentiable convex function. Then

(i) the inequality

holds. If  is differentiable concave, then the left-hand side of (3.7) should be

(ii) if  is monotone, then the inequality

holds. If  is differentiable and monotone concave, then the left-hand side of (3.8) should be  .

Proof.

-

(i)

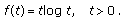

Setting

in (1.4), we get (3.7).

in (1.4), we get (3.7). -

(ii)

Setting

, and

, and  in (3.1), we get (3.8).

in (3.1), we get (3.8).

4. Improvements of the Levinson Inequality

Theorem 4.1.

If the third derivative of  exists and is nonnegative, then for  and  one has

(i)

(4.1)

(ii) if  is monotone and  for  , then

Proof.

(i) Since for a $3$-convex function  the function  is convex on  , setting  in the discrete case of [2, Theorem 2] gives (4.1).

(ii) Since  is monotone convex, setting  in (3.5) gives (4.2).

Ky Fan Inequality

Let $x_i \in (0, 1/2]$ and $p_i > 0$ $(i = 1, \dots, n)$ be such that $\sum_{i=1}^{n} p_i = 1$. We denote by $G_n$ and $A_n$ the weighted geometric and arithmetic means of the $x_i$, respectively, that is,

$$G_n = \prod_{i=1}^{n} x_i^{p_i}, \qquad A_n = \sum_{i=1}^{n} p_i x_i, \tag{4.3}$$

and also by $A'_n$ and $G'_n$ the arithmetic and geometric means of $1 - x_i$, respectively, that is,

$$A'_n = \sum_{i=1}^{n} p_i (1 - x_i), \qquad G'_n = \prod_{i=1}^{n} (1 - x_i)^{p_i}. \tag{4.4}$$

The following remarkable inequality, due to Ky Fan, is valid [6, page 5]:

$$\frac{G_n}{G'_n} \le \frac{A_n}{A'_n}, \tag{4.5}$$

with equality if and only if $x_1 = \dots = x_n$.
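A small numerical check of the Ky Fan inequality may help fix the notation. The sketch assumes points $x_i \in (0, 1/2]$ and positive weights summing to 1, and compares $G_n / G'_n$ with $A_n / A'_n$. The helper name `weighted_means` and the data are hypothetical.

```python
import math

def weighted_means(xs, ps):
    """Return (arithmetic mean, geometric mean) of xs with probability weights ps."""
    arithmetic = sum(p * x for p, x in zip(ps, xs))
    geometric = math.exp(sum(p * math.log(x) for p, x in zip(ps, xs)))
    return arithmetic, geometric

# Hypothetical data: points in (0, 1/2] and weights summing to 1.
xs = [0.1, 0.25, 0.4, 0.5]
ps = [0.1, 0.2, 0.3, 0.4]

A, G = weighted_means(xs, ps)                      # means of x_i
A1, G1 = weighted_means([1 - x for x in xs], ps)   # means of 1 - x_i

lhs = G / G1   # geometric-mean ratio
rhs = A / A1   # arithmetic-mean ratio

# Equality case: all points equal.
Ae, Ge = weighted_means([0.3] * 4, ps)
Ae1, Ge1 = weighted_means([0.7] * 4, ps)
```

The restriction to $(0, 1/2]$ matters: the function $\log x - \log(1 - x)$ is concave there, which is what drives the inequality.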

Inequality (4.5) has evoked the interest of several mathematicians and in numerous articles new proofs, extensions, refinements and various related results have been published [7].

The following improvement of Ky Fan inequality is valid [2].

Corollary 4.2.

Let  and  be as defined earlier. Then the following inequalities are valid:

(i)

(4.6)

(ii)

(4.7)

Proof.

(i) Setting  ,  in (4.1), we get (4.6).

(ii)

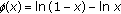

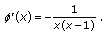

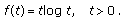

Consider  and  ; then  is strictly monotone convex on the interval  and has derivative

(4.8)

Then the application of inequality (4.2) to this function is given by

From (4.9) we get (4.7).

5. On Some Inequalities for Csiszár Divergence Measures

Let  be a measure space satisfying  and  a $\sigma$-finite measure on  with values in  . Let  be the set of all probability measures on the measurable space  which are absolutely continuous with respect to  . For  , let  and  denote the Radon-Nikodym derivatives of  and  with respect to  , respectively.

Csiszár introduced the concept of $f$-divergence for a convex function  that is continuous at 0 as follows (cf. [8], see also [9]).

Definition 5.1.

Let  . Then

is called the $f$-divergence of the probability distributions  and  .

We give some important $f$-divergences, which play a significant role in information theory and statistics.

-

(i)

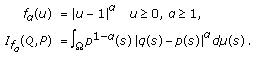

The class of

-divergences: the

-divergences: the  -divergences, in this class, are generated by the family of functions:

-divergences, in this class, are generated by the family of functions:  (5.2)

(5.2)

For  , it gives the total variation distance:

, it gives the total variation distance:

For  , it gives the Karl pearson

, it gives the Karl pearson  -divergence:

-divergence:

(ii)The  -order Renyi entropy: for

-order Renyi entropy: for  , let

, let

Then  gives

gives  -order entropy

-order entropy

-

(iii)

Harmonic distance: let

(5.7)

(5.7)

Then  gives Harmonic distance

gives Harmonic distance

-

(iv)

Kullback-Leibler: let

(5.9)

(5.9)

Then  -divergence functional gives rise to Kullback-Leibler distance [10]

-divergence functional gives rise to Kullback-Leibler distance [10]

The one parametric generalization of the Kullback-Leibler [10] relative information studied in a different way by Cressie and Read [11].

-

(v)

The Dichotomy class: this class is generated by the family of functions

,

,

This class gives, for particular values of  , some important divergences. For instance, for

, some important divergences. For instance, for  we have Hellinger distance and some other divergences for this class are given by

we have Hellinger distance and some other divergences for this class are given by

where  and

and  are positive integrable functions with

are positive integrable functions with

There are various other divergences, such as Arimoto-type divergences, Matusita's divergence, and Puri-Vincze divergences (cf. [12–14]), used in various problems in information theory and statistics. An application of Theorem 1.1 is the following result given by Csiszár and Körner (cf. [15]).

Theorem 5.2.

Let  be convex, and let  and  be positive integrable functions with  . Then the following inequality is valid:

where  .

Proof.

By substituting  and  in Theorem 1.1 we get (5.13).
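To make the divergence measures concrete, the following sketch computes discrete $f$-divergences. It assumes the convention $\sum_i q_i f(p_i / q_i)$, which is one common ordering of the arguments and may differ from the one used in Definition 5.1. The generators shown are the standard ones for the Kullback-Leibler, $\chi^2$, and total variation divergences. The last lines illustrate the Jensen-type lower bound $Q\,f(P/Q)$ with $P = \sum_i p_i$ and $Q = \sum_i q_i$, which follows from Jensen's inequality with weights $q_i / Q$. All names and data are hypothetical.

```python
import math

def f_divergence(f, p, q):
    """Discrete sum_i q_i * f(p_i / q_i); the argument order is an assumed convention."""
    return sum(qi * f(pi / qi) for pi, qi in zip(p, q))

def kl_gen(t):
    """Generator t*log(t) of the Kullback-Leibler divergence."""
    return t * math.log(t)

def chi2_gen(t):
    """Generator (t - 1)^2 of the chi-square divergence."""
    return (t - 1.0) ** 2

def tv_gen(t):
    """Generator |t - 1| of the total variation distance."""
    return abs(t - 1.0)

# Hypothetical probability vectors.
p = [0.2, 0.5, 0.3]
q = [0.4, 0.4, 0.2]

kl = f_divergence(kl_gen, p, q)
chi2 = f_divergence(chi2_gen, p, q)
tv = f_divergence(tv_gen, p, q)

# Direct formulas for comparison.
kl_direct = sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))
tv_direct = sum(abs(pi - qi) for pi, qi in zip(p, q))

# Jensen-type lower bound for positive, not necessarily normalized, vectors.
p2 = [1.0, 2.0, 3.0]
q2 = [2.0, 2.0, 1.0]
P, Q = sum(p2), sum(q2)
lower = Q * kl_gen(P / Q)
value = f_divergence(kl_gen, p2, q2)
```

For probability vectors $P = Q = 1$, so the bound reduces to $f(1)$, which is $0$ for each generator above.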

A similar consequence of Theorems 1.2 and 2.1 for the divergence measures discussed above is the following result.

Theorem 5.3.

Let  and  be positive integrable functions with  . Define the function

and let  be positive. Then

(i) it holds that

where  define the determinant of order  with elements  and

(ii)  is log-convex.

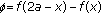

New means of Cauchy type were defined in [4]. The following definition applies these means to divergence measures.

Definition 5.4.

Let  and  be positive integrable functions with  . The mean  is defined as

where  and  ,

where  and  .

Theorem 5.5.

Let  be nonnegative reals such that  ; then

Proof.

By using the log-convexity of  we get the following result for  such that  and

Also, for  we consider the limiting case, and the result follows from the continuity of  .

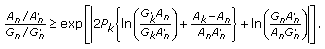

An application of Theorem 1.3 in divergence measure is the following result given in [16].

Theorem 5.6.

Let  be a differentiable convex function on  ; then

where

Proof.

By substituting  and  in Theorem 1.3, we get (5.20).

Theorem 5.7.

Let  be a differentiable monotone convex function on  and let  for

where

and  as in Theorem 5.6.

Proof.

By substituting  and  in Theorem 3.1(ii) we get (5.22).

Corollary 5.8.

It holds that

where

and  as in Theorem 5.7.

Proof.

The proof follows by setting  in Theorem 5.7.

Corollary 5.9.

Let  be as given in (5.11); then

(i) for  one has

(ii) for  one has

(iii) for  one has

where

and  as in Theorem 5.7.

Proof.

The proof follows by setting  to be as given in (5.11), in Theorem 3.1.

References

[1] Anwar M, Pečarić J: On logarithmic convexity for differences of power means and related results. Mathematical Inequalities & Applications 2009,12(1):81–90.

[2] Hussain S, Pečarić J: An improvement of Jensen's inequality with some applications. Asian-European Journal of Mathematics 2009,2(1):85–94. 10.1142/S179355710900008X

[3] Simić S: On logarithmic convexity for differences of power means. Journal of Inequalities and Applications 2007, 2007:-8.

[4] Anwar M, Pečarić J: New means of Cauchy's type. Journal of Inequalities and Applications 2008, 2008: 10.

[5] Dragomir SS, McAndrew A: Refinements of the Hermite-Hadamard inequality for convex functions. Journal of Inequalities in Pure and Applied Mathematics 2005,6(2, article 140):-6.

[6] Alzer H: The inequality of Ky Fan and related results. Acta Applicandae Mathematicae 1995,38(3):305–354. 10.1007/BF00996150

[7] Beckenbach EF, Bellman R: Inequalities, Ergebnisse der Mathematik und ihrer Grenzgebiete, N. F.. Volume 30. Springer, Berlin, Germany; 1961:xii+198.

[8] Csiszár I: Information measures: a critical survey. In Transactions of the 7th Prague Conference on Information Theory, Statistical Decision Functions and the 8th European Meeting of Statisticians. Academia, Prague, Czech Republic; 1978:73–86.

[9] Pardo MC, Vajda I: On asymptotic properties of information-theoretic divergences. IEEE Transactions on Information Theory 2003,49(7):1860–1868. 10.1109/TIT.2003.813509

[10] Kullback S, Leibler RA: On information and sufficiency. Annals of Mathematical Statistics 1951, 22: 79–86. 10.1214/aoms/1177729694

[11] Cressie P, Read TRC: Multinomial goodness-of-fit tests. Journal of the Royal Statistical Society. Series B 1984,46(3):440–464.

[12] Kafka P, Österreicher F, Vincze I: On powers of f-divergences defining a distance. Studia Scientiarum Mathematicarum Hungarica 1991,26(4):415–422.

[13] Liese F, Vajda I: Convex Statistical Distances, Teubner Texts in Mathematics. Volume 95. BSB B. G. Teubner Verlagsgesellschaft, Leipzig, Germany; 1987:224.

[14] Österreicher F, Vajda I: A new class of metric divergences on probability spaces and its applicability in statistics. Annals of the Institute of Statistical Mathematics 2003,55(3):639–653. 10.1007/BF02517812

[15] Csiszár I, Körner J: Information Theory: Coding Theorems for Discrete Memoryless Systems, Probability and Mathematical Statistics. Academic Press, New York, NY, USA; 1981:xi+452.

[16] Anwar M, Hussain S, Pečarić J: Some inequalities for Csiszár-divergence measures. International Journal of Mathematical Analysis 2009,3(26):1295–1304.

Acknowledgments

This research work is funded by the Higher Education Commission Pakistan. The research of the fourth author is supported by the Croatian Ministry of Science, Education and Sports under the Research Grants 117-1170889-0888.

Rights and permissions

Open Access This article is distributed under the terms of the Creative Commons Attribution 2.0 International License (https://creativecommons.org/licenses/by/2.0), which permits unrestricted use, distribution, and reproduction in any medium, provided the original work is properly cited.

About this article

Cite this article

Adil Khan, M., Anwar, M., Jakšetić, J. et al. On Some Improvements of the Jensen Inequality with Some Applications. J Inequal Appl 2009, 323615 (2009). https://doi.org/10.1155/2009/323615

DOI: https://doi.org/10.1155/2009/323615
